Published

3 days ago

What is a graphics processor? Everything you need to know about GPU

The graphics processing unit (GPU) is a specialized electronic circuit designed to manipulate memory rapidly in order to accelerate the creation and display of images on a monitor. A graphics processor provides a number of basic graphics primitives, which in their simplest form are used to draw rectangles, triangles, circles, and arcs, and it creates images much faster than a general-purpose processor (CPU).

- What is a graphics processor (GPU)?
- 3D image
- Bitmapped graphics (BMP.)
- Vector graphics
- Rendering
- Graphics API
- What is GDDR?
- History of 3D graphics
- How to produce 3D graphics
- 3D modeling
- Layout and animation
- Rendering
- Shading technique
- Pixel shaders
- Vertex shaders
- Difference between GPU and CPU
- Familiarity with GPU architecture
- Tensor kernels
- Ray tracing engine
- What is GPGPU?
- What is CUDA?
- Advantages of CUDA kernels
- Disadvantages of CUDA kernels
- OpenCL; CUDA replacement
- CUDA and OpenCL vs. OpenGL
- OpenCL or CUDA
- The most prominent brands
- Intel
- Nvidia
- AMD
- The difference between graphics processor and graphics card
- Graphics card components
- Video memory
- Printed circuit board
- Display connectors
- Bridge
- Graphic interface
- Voltage regulator circuit
- Cooling system
- Types of graphics processor
- iGPU
- dGPU
- Cloud GPU
- eGPU
- Mobile GPU
- Types of mobile GPUs
- Other applications of graphics processors
- Video editing
- 3D graphics rendering
- Machine learning
- Blockchain and digital currency mining

In fact, what we see on the screens is the output of the graphics processors that are used in many systems such as phones, computers, workstations, and game consoles.

What is a graphics processor (GPU)?

If we think of the central processing unit (CPU) as the brain of the computer, managing all calculations and logical instructions, then the graphics processing unit (GPU) can be thought of as the unit that manages the visual and graphical output of those calculations, instructions, and image-related data. The parallel structure of GPUs makes them more efficient than CPUs at processing large blocks of data. In effect, the GPU acts as a graphical interface that converts the calculations made by the processor into a form the user can understand, and it is safe to say that any device that displays graphical output is equipped with some kind of graphics processor.

The graphics processing unit in a computer can sit on a graphics card, be embedded on the motherboard, or be integrated with the processor on a single chip (for example, AMD APUs).

Integrated chips generally cannot produce very impressive graphics output, and their output is unlikely to satisfy a demanding gamer. To benefit from higher-quality visual effects, you need a separate graphics card with capabilities beyond those of a simple graphics processor (the difference between a graphics processor and a graphics card is covered later). In the following, we briefly introduce some basic concepts used in discussions of graphics.

3D image

An image that has depth in addition to width and height is called a three-dimensional image; it conveys more to the viewer and carries more information than a two-dimensional image. For example, a triangle consists of just three lines and three angles, whereas a pyramid built on it (a tetrahedron) is a three-dimensional structure with four triangular faces, six edges, and four vertices.

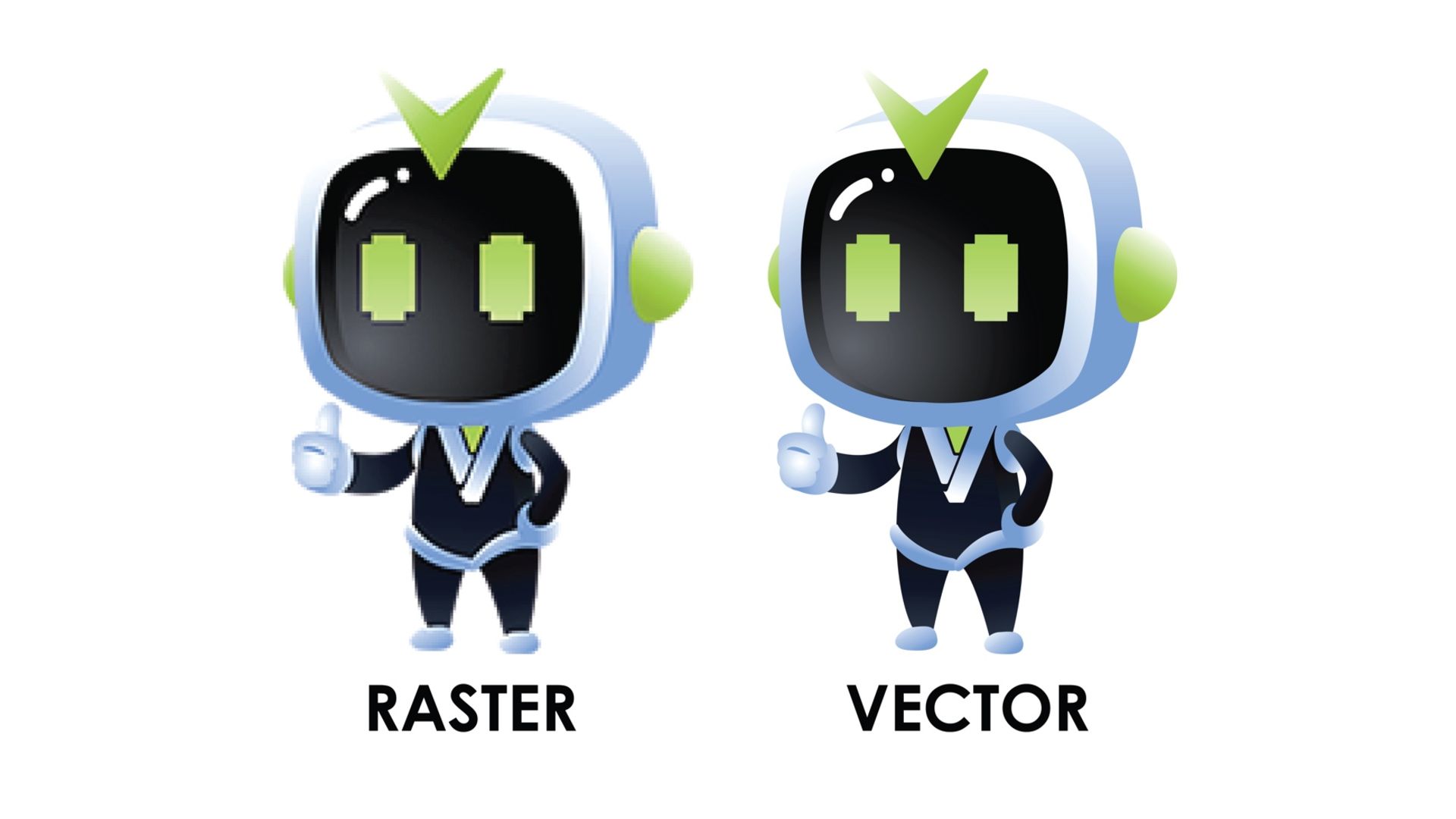

Bitmapped graphics (BMP.)

Bitmapped graphics, or raster graphics, are digital images in which each pixel is represented by a number of bits. The image is divided into small squares, or pixels, each of which holds information such as color and transparency; in raster graphics, therefore, every pixel corresponds to a computed, predetermined value that can be specified very precisely.

Raster graphics are resolution-dependent: an image is stored at a fixed resolution, so it cannot be scaled up without a visible loss of quality.

Vector graphics

A vector graphic (in formats such as .ai, .eps, .pdf, or .svg) is an image built from paths with start and end points. These paths are all based on mathematical expressions and consist of basic geometric shapes such as lines, polygons, and curves. The main advantage of vector graphics over bitmapped graphics is that they scale without losing quality: a vector image can be enlarged as far as the rendering device allows, with no loss of quality.

As mentioned, vector graphics can be scaled to any size with the help of mathematical formulas, whereas bitmapped graphics lose quality when scaled. The pixels of a bitmapped graphic must be interpolated when upscaling, which blurs the image, and resampled when downscaling, which discards image data.
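The contrast between the two kinds of scaling can be sketched in a few lines of Python. This is only an illustration under simple assumptions: the raster image is a single row of grayscale values, the interpolation is plain linear interpolation, and the helper names `upscale_row` and `scale_path` are made up for this example.

```python
def upscale_row(pixels, factor):
    """Linearly interpolate a row of grayscale pixels to `factor` times its length."""
    n = len(pixels)
    total = n * factor
    out = []
    for i in range(total):
        # Position of output sample i, expressed in source-pixel coordinates.
        x = i * (n - 1) / max(total - 1, 1)
        left = int(x)
        right = min(left + 1, n - 1)
        t = x - left
        out.append(round(pixels[left] * (1 - t) + pixels[right] * t))
    return out


def scale_path(points, factor):
    """Scale a vector path exactly by multiplying its coordinates."""
    return [(x * factor, y * factor) for x, y in points]


# A hard black-to-white edge turns into a soft gradient when upscaled.
soft_edge = upscale_row([0, 255], 2)

# A vector triangle keeps perfectly sharp corners at any size.
big_triangle = scale_path([(0, 0), (1, 0), (0, 1)], 4)
```

The upscaled row contains in-between gray values that were never in the original image, which is the blur described above; the scaled path still describes exactly the same triangle.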

In general, vector graphics are best for creating works of art consisting of geometric shapes, such as logos or digital maps, typefaces, or graphic designs, while raster graphics deal more with real photos and images and are suitable for photographic images.

Vector graphics are a good choice for banners or logos, because images made this way look equally sharp at small and large sizes. One of the most popular programs for viewing and creating vector images is Adobe Illustrator.

Rendering

The process of producing an image from a 3D computational model in software and displaying it as output on a 2D screen is called rendering.

Graphics API

An application programming interface (API) is a protocol for communication between different parts of computer programs and an important tool for letting software interact with graphics hardware. Such a protocol may be based on the web, the operating system, a data center, hardware, or software libraries. Today, many tools and programs have been developed for imaging and rendering 3D models, and one of the important uses of graphics APIs is to make imaging and rendering easier for developers. In effect, graphics APIs give developers an abstracted way to access graphics platforms for building and testing their applications. Some of the best-known graphics APIs are introduced below:

OpenGL

OpenGL (short for Open Graphics Library) is a cross-platform standard and application programming interface (API) for rendering 2D and 3D graphics, used with graphics accelerators in video games, design, virtual reality, and other applications. The library offers more than 250 different function calls for drawing 3D images and was offered in two implementations: Microsoft (often bundled with Windows or graphics card installation software) and Cosmo (for systems without a graphics accelerator).

The OpenGL graphic interface was first designed by Silicon Graphics in 1991 and was released in 1992; The latest version of this API, OpenGL 4.6, was also introduced in July 2017.

DirectX

DirectX is a set of application programming interfaces (APIs) developed by Microsoft to let software communicate instructions to audio and video hardware. Games built on DirectX can use multimedia features and graphics accelerators more efficiently, which improves their overall performance.

When Microsoft was preparing to release Windows 95 in late 1994, Alex St. John, a Microsoft employee, researched the development of games compatible with MS-DOS. The programmers of these games often rejected the possibility of porting them to Windows 95 and considered it difficult to develop games for the Windows environment. For this purpose, a three-person team was formed and within four months, this team was able to develop the first set of application programming interfaces (API) called DirectX to solve this problem.

The first version of DirectX was released in September 1995 as the Windows Games SDK, replacing the Win32 DCI and WinG APIs for Windows 3.1. DirectX for Windows 95 and all subsequent versions of Microsoft Windows allowed them to host high-performance multimedia content.

To increase developers' acceptance of DirectX, Microsoft offered John Carmack, the developer of Doom and Doom 2, to port these two games from MS-DOS to Windows 95 with DirectX for free, with the rights to the games remaining with their developer. Carmack agreed, and the first version of the game, Doom 95, was released in August 1996 as the first game developed on DirectX. DirectX 2.0 became a part of Windows itself with the release of the next version of Windows 95 and of Windows NT 4.0 in mid-1996.

Because Windows 95 was still in its infancy and few games had been published for it, Microsoft promoted the API heavily, and at one event it introduced Direct3D and DirectPlay for the first time in an online multiplayer demo of MechWarrior 2. The DirectX development team faced the challenge of testing each version of the API against every combination of computer hardware and software. A wide range of graphics cards, sound cards, motherboards, processors, input devices, games, and other multimedia applications were tested with each beta and final release, and test suites were even produced and distributed so that the hardware industry could check the compatibility of its new designs and driver versions with DirectX.

The latest version of DirectX, DirectX 12, was unveiled in 2014 and officially released a year later along with Windows 10. This graphics API has explicit multi-adapter support, which allows several graphics processors in one system to be used simultaneously.

Before DirectX, Microsoft had included OpenGL in its Windows NT platform. Direct3D was intended as a Microsoft-controlled alternative to OpenGL, focused initially on gaming. Over this period OpenGL also evolved to better support programming techniques for interactive multimedia applications such as games, but on Windows it gradually lost ground to Direct3D in that competition.

Vulkan

Vulkan is a low-overhead, cross-platform graphics API for graphics applications such as gaming and content creation. Compared with OpenGL and older versions of DirectX, it is designed for lower CPU overhead, which can also reduce power consumption, and it can render 2D graphics as well.

At first, many expected Vulkan to be the next, improved OpenGL and a continuation of its path, but over time it has established itself as a distinct API. The following table shows the main differences between the two graphics APIs.

| OpenGL | Vulkan |
|---|---|
| It has only one global state machine | It is object-based and lacks a global state |
| State is limited to a single context | All state concepts are placed in the command buffer |
| Functions are only performed sequentially | It has multi-threaded programming capability |
| Memory and GPU synchronization are usually hidden | It is possible to control and manage synchronization and memory |
| Error checking is done continuously | Drivers do not perform error checking at runtime. Instead, there is a validation layer for developers. |
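The first three rows of the table can be pictured with a toy model. The sketch below is plain Python, not the Vulkan API: the `CommandBuffer` class and the `record`, `submit`, and `render_frame` helpers are hypothetical names invented for this illustration. It shows state living inside each command buffer rather than in one global state machine, which is what lets several threads record work independently.

```python
from concurrent.futures import ThreadPoolExecutor


class CommandBuffer:
    """Hypothetical stand-in for a command buffer: it carries its own state."""

    def __init__(self):
        self.commands = []

    def set_color(self, color):
        self.commands.append(("set_color", color))

    def draw(self, name):
        self.commands.append(("draw", name))


def record(job):
    """Record one job into its own buffer; no global state is touched."""
    color, names = job
    buffer = CommandBuffer()
    buffer.set_color(color)
    for name in names:
        buffer.draw(name)
    return buffer


def submit(buffers):
    """Replay the buffers in order, as a queue submission would."""
    drawn = []
    for buffer in buffers:
        color = None  # state starts fresh for every buffer
        for op, arg in buffer.commands:
            if op == "set_color":
                color = arg
            else:
                drawn.append((arg, color))
    return drawn


def render_frame(jobs):
    # Buffers are recorded on worker threads; map() keeps the job order.
    with ThreadPoolExecutor(max_workers=2) as pool:
        buffers = list(pool.map(record, jobs))
    return submit(buffers)
```

Calling `render_frame([("red", ["cube"]), ("blue", ["sphere", "cone"])])` records two buffers on separate threads and replays them in order. With a single global state machine, the two `set_color` calls would have had to be serialized to avoid overwriting each other.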

Mantle

The Mantle graphics API is a low-overhead interface for rendering 3D video games. It was developed by AMD together with video game developer DICE, starting in 2013. The partnership was intended to compete with Direct3D and OpenGL on home computers; however, Mantle was officially discontinued in 2019 and replaced by the Vulkan graphics API. Mantle could reduce the workload of the processor and eliminate bottlenecks in the processing pipeline.

Metal

Metal is Apple's proprietary graphics API, whose shading language is based on C++, and it was first used in iOS 8. Metal can be seen as combining the roles of the OpenGL graphics interface and the OpenCL framework, and it was designed to give iOS, macOS, and tvOS an API comparable to those of other platforms, such as Vulkan and DirectX 12. In 2017, the second version of the Metal graphics API was released with support for macOS High Sierra, iOS 11, and tvOS 11. This version was more efficient and better optimized than its predecessor.

What is GDDR?

The DDR memory used with a graphics processing unit is called GDDR, or GPU RAM. DDR (short for Double Data Rate) is an advanced version of synchronous dynamic RAM (SDRAM) and uses the same frequencies. The difference between DDR and SDRAM is the number of times data is sent per clock cycle: DDR transfers data twice per cycle, doubling the memory speed, while SDRAM transfers data only once per cycle. DDR quickly became popular because, in addition to doubling the transfer rate, it is cheaper than the older SDRAM modules and consumes less power.

GDDR was developed for fast rendering on the graphics processor. Compared with ordinary DDR, this memory runs at a higher frequency and produces less heat, and it is considered a replacement for VRAM and WRAM. Six generations have been released so far, each faster and more advanced than the previous one.

GDDR5 is the previous generation of video RAM and was introduced more than a decade ago; the current standard is GDDR6. With a transfer rate of 16 Gbit/s per pin (double that of GDDR5) and a read/write access granularity of 32 bytes (the same as GDDR5), GDDR6 is used in Nvidia's RTX 30 series and AMD's Radeon RX 6000 series graphics cards. GDDR version numbers do not correspond to DDR version numbers: GDDR5, like GDDR4, is based on DDR3 technology, and GDDR6 is based on DDR4 technology. In practice, GDDR follows a path that is relatively independent of DDR.
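To make these figures concrete, here is a small arithmetic sketch. It assumes the quoted "16" is a per-pin data rate in Gbit/s, and the 256-bit bus width is an example value chosen for illustration, not a figure from this article; the function names are likewise made up.

```python
def transfers_per_second(clock_hz, transfers_per_cycle):
    """SDRAM moves data once per clock cycle, DDR twice."""
    return clock_hz * transfers_per_cycle


def peak_bandwidth_gb_per_s(rate_gbit_per_pin, bus_width_bits):
    """Peak bandwidth in GB/s: per-pin rate times bus width, divided by 8 bits per byte."""
    return rate_gbit_per_pin * bus_width_bits / 8


# Same 200 MHz clock, but DDR performs twice as many transfers.
sdram = transfers_per_second(200_000_000, 1)
ddr = transfers_per_second(200_000_000, 2)

# Hypothetical card with a 256-bit memory bus.
gddr6 = peak_bandwidth_gb_per_s(16, 256)  # 512.0 GB/s
gddr5 = peak_bandwidth_gb_per_s(8, 256)   # 256.0 GB/s
```

Under these assumptions, doubling the per-pin rate doubles the peak bandwidth of the whole card, which is why each GDDR generation matters so much for rendering speed.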

The main task of the graphics processor is to render images. To do this, it needs space to store the information required to build the finished image, so the graphics unit uses RAM (random access memory) to store data: information about each pixel of the image, including its color and its location on the screen. A pixel can be defined as a single point in a raster image, which is a dot-matrix data structure representing a rectangular grid of pixels. RAM can also hold completed images until it is time to display them; this area is called the frame buffer.
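As a rough sketch of how much memory a frame buffer needs, the calculation below assumes a simple, tightly packed layout with 32 bits (4 bytes) per pixel stored row by row. Real hardware may pad or tile the buffer differently, and the function names are illustrative only.

```python
def framebuffer_bytes(width, height, bits_per_pixel=32):
    """Memory needed to hold one completed image."""
    return width * height * bits_per_pixel // 8


def pixel_offset(x, y, width, bytes_per_pixel=4):
    """Byte offset of pixel (x, y) when rows are stored one after another."""
    return (y * width + x) * bytes_per_pixel


full_hd = framebuffer_bytes(1920, 1080)        # 8,294,400 bytes, about 7.9 MiB
second_row_start = pixel_offset(0, 1, 1920)    # 7,680 bytes into the buffer
```

A single Full HD frame at this depth already takes about 8 MB, before counting textures, depth buffers, or extra frames held for double buffering.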

Before graphics processors were developed, the CPU was responsible for processing and rendering images to create output; this put a lot of pressure on the processor and slowed the system down. The spark for today's 3D graphics came from the further development of arcade games, gaming consoles, military, robotics, and space simulators, and medical imaging.

In the following and before examining the history of the graphic processing unit, we will introduce the concepts that are relevant in this industry:

History of 3D graphics

The term GPU was first used in the 1970s as an abbreviation for Graphic Processor Unit. It described a programmable processing unit that worked independently of the central processing unit (CPU) and was responsible for graphics manipulation and output; at that time, of course, the term did not have the meaning it has today.

In 1981, IBM introduced its first two graphics cards, the MDA (Monochrome Display Adapter) and the CGA (Color Graphics Adapter). The MDA had four kilobytes of video memory and supported only text display; this card is no longer used today but may still be found in some older systems.

The CGA, considered the first color graphics card for PCs, had only sixteen kilobytes of video memory and could produce 16 colors at a resolution of 160 x 200 pixels. A year later, Hercules Graphics responded to IBM's cards with the HGC (Hercules Graphics Card), which had 64 kilobytes of video memory and combined MDA-style text display with bitmapped graphics.

In 1983, Intel entered the graphics card market with the introduction of the iSBX 275 Video Graphics Multimodule. This card could display eight colors with a resolution of 256 x 256. A year after this, IBM introduced PGC or Professional Graphic Controller and EGA or Enhanced Graphic Adapter graphics that displayed 16 colors with a resolution of 640 x 350 pixels.

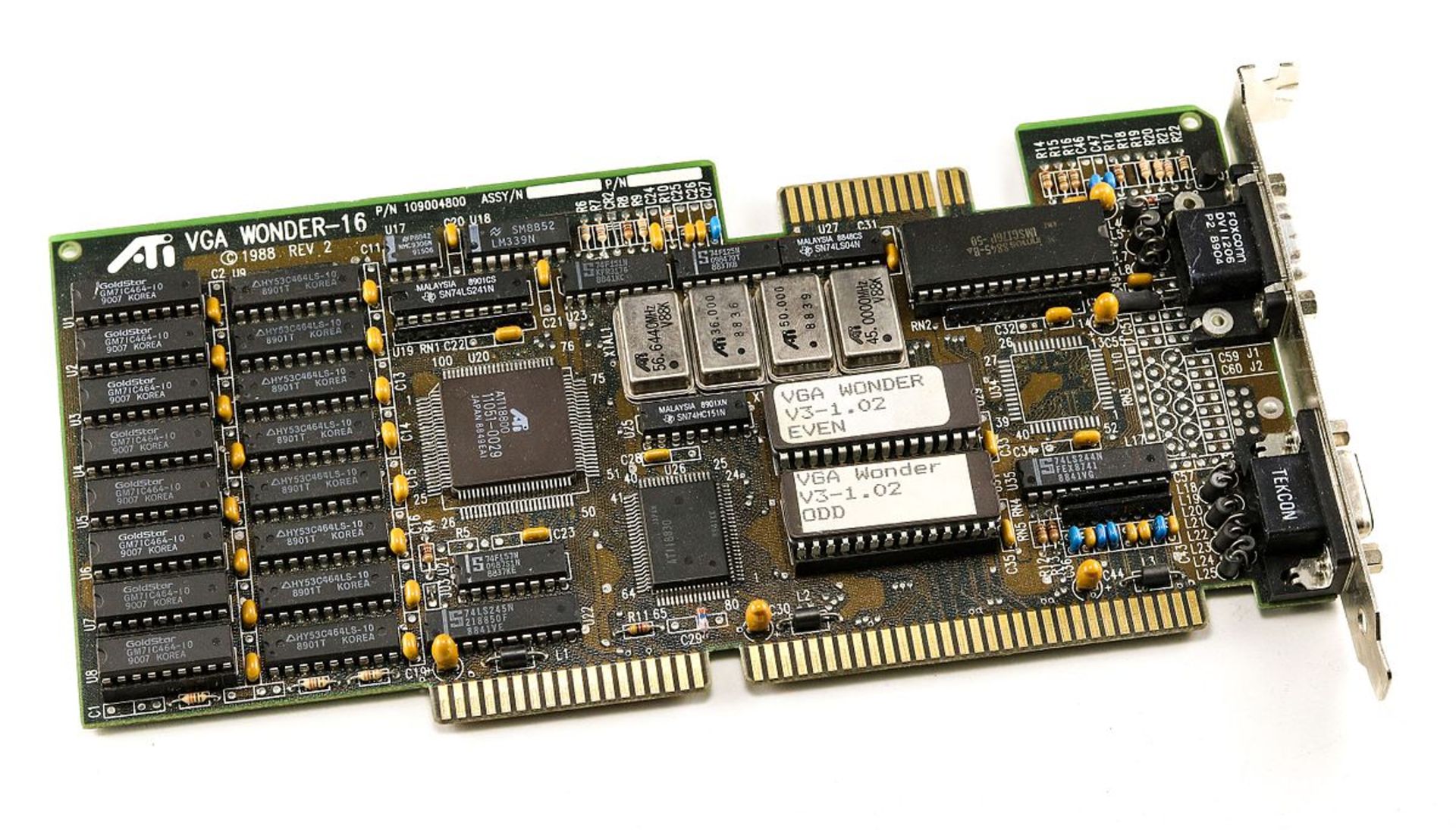

The VGA (Video Graphics Array) standard was introduced in 1987; it offered a resolution of 640 x 480 with 16 colors and up to 256 kilobytes of video memory. In the same year, ATI introduced its first VGA graphics card, the ATI VGA Wonder; some models were even equipped with a port for connecting a mouse. Until then, video cards had little memory of their own: the processor did the graphics calculations and passed the results to video memory, and the card converted them into a signal for the output device.

After the first 3D video games were released, it was no longer possible to process graphics inputs quickly on processors; In this situation, the basic concept of a graphic processing unit was formed. This concept was initially developed with the introduction of the graphics accelerator; The graphics accelerator was used to boost system performance, perform calculations and graphic processing, and lighten the workload of the processor, and had a significant impact on computer performance, especially intensive graphics processing. In 1992, Silicon Graphics released OpenGL, the first library of various functions for drawing 3D images.

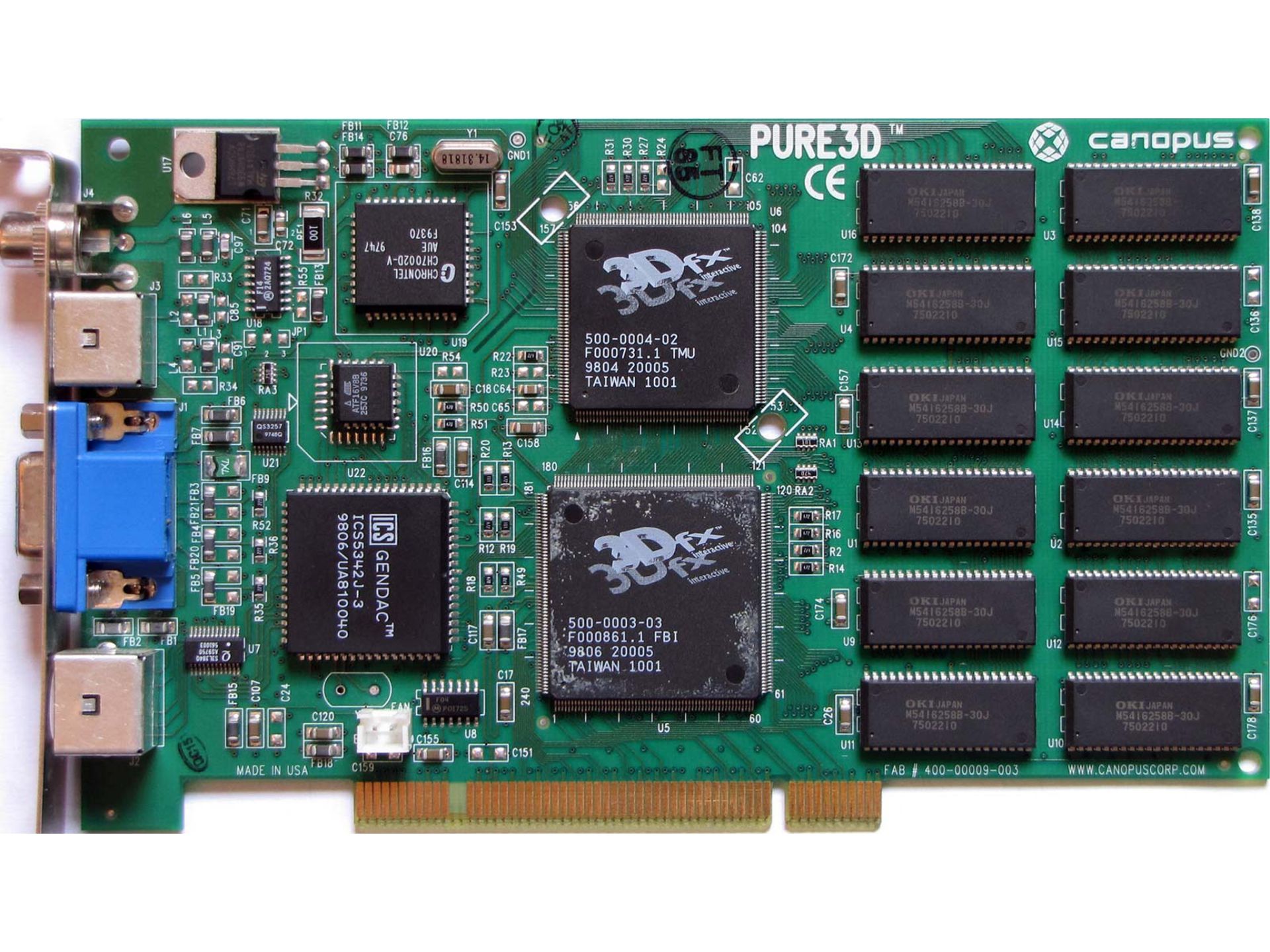

Four years later, a company called 3dfx introduced its first graphics card, the Voodoo1. The card handled only 3D rendering, so it had to be installed alongside a separate 2D graphics card, and it quickly became popular among gamers.

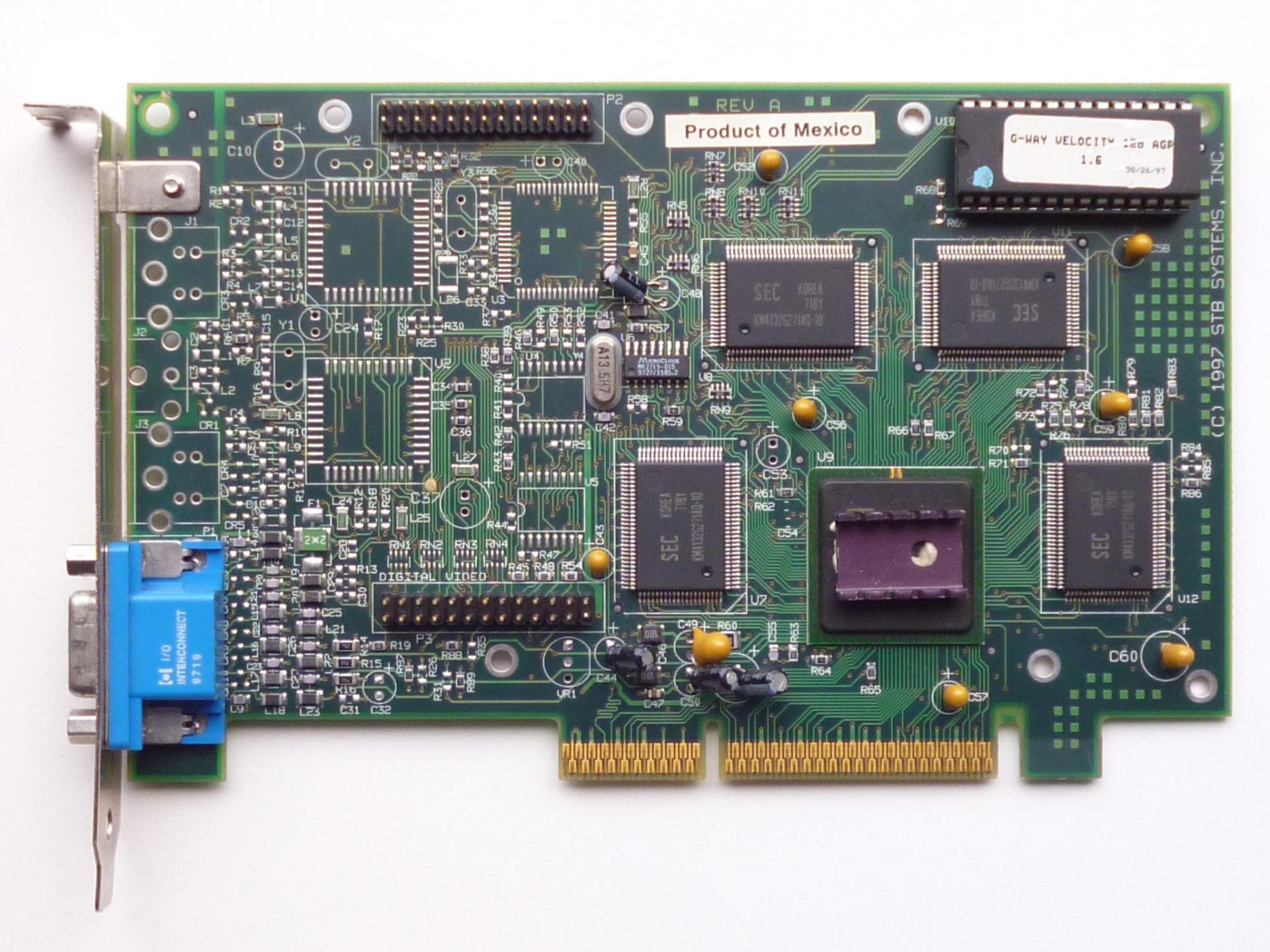

In 1997, in response to Voodoo, Nvidia released the RIVA 128 graphics accelerator. Like the Voodoo1, the RIVA 128 allowed video card manufacturers to use graphics accelerators along with 2D graphics, but compared to the Voodoo1, it had weaker graphics rendering.

After the RIVA 128, 3dfx released the Voodoo2 as a replacement for the Voodoo1. It was the first graphics card to support SLI (Scan-Line Interleave in 3dfx's usage), which allowed two cards to be connected to produce a single output. Nvidia later reused the SLI name, as Scalable Link Interface, for its own multi-GPU technology for parallel processing and higher graphics performance, which is now obsolete.

The term GPU was popularized in 1999, when Nvidia launched the GeForce 256 and marketed it as the world's first graphics processor. Nvidia described this GPU as a single-chip processor with integrated transform (converting a 3D scene into a 2D view), lighting, triangle setup and clipping, and rendering engines. ATI Technologies, for its part, released the Radeon 9700 in 2002 to compete with Nvidia and used the term Visual Processing Unit (VPU).

With the passage of time and the advancement of technology, GPUs were equipped with programmable capabilities, which made Nvidia and ATI enter the competition scene and introduce their first graphics processors (GeForce for Nvidia and Radeon for ATI).

The GeForce 256, released in 1999, is known as the world's first true GPU; it had 32 MB of DDR memory (the type of memory later known as GDDR) and fully supported DirectX 7.

Alongside the efforts to speed up computer graphics processing and improve its quality, video game and console makers were also active in this field, each in its own way (Sega with the Dreamcast, Sony with the PS1, and Nintendo with the Nintendo 64).

How to produce 3D graphics

The process of producing 3D graphics is divided into three main stages:

3D modeling

3D modeling is the process of developing a coordinate-based mathematical representation of an object or surface (inanimate or animate) in three dimensions. It is done in specialized software by manipulating edges, vertices, and polygons in a simulated 3D space.

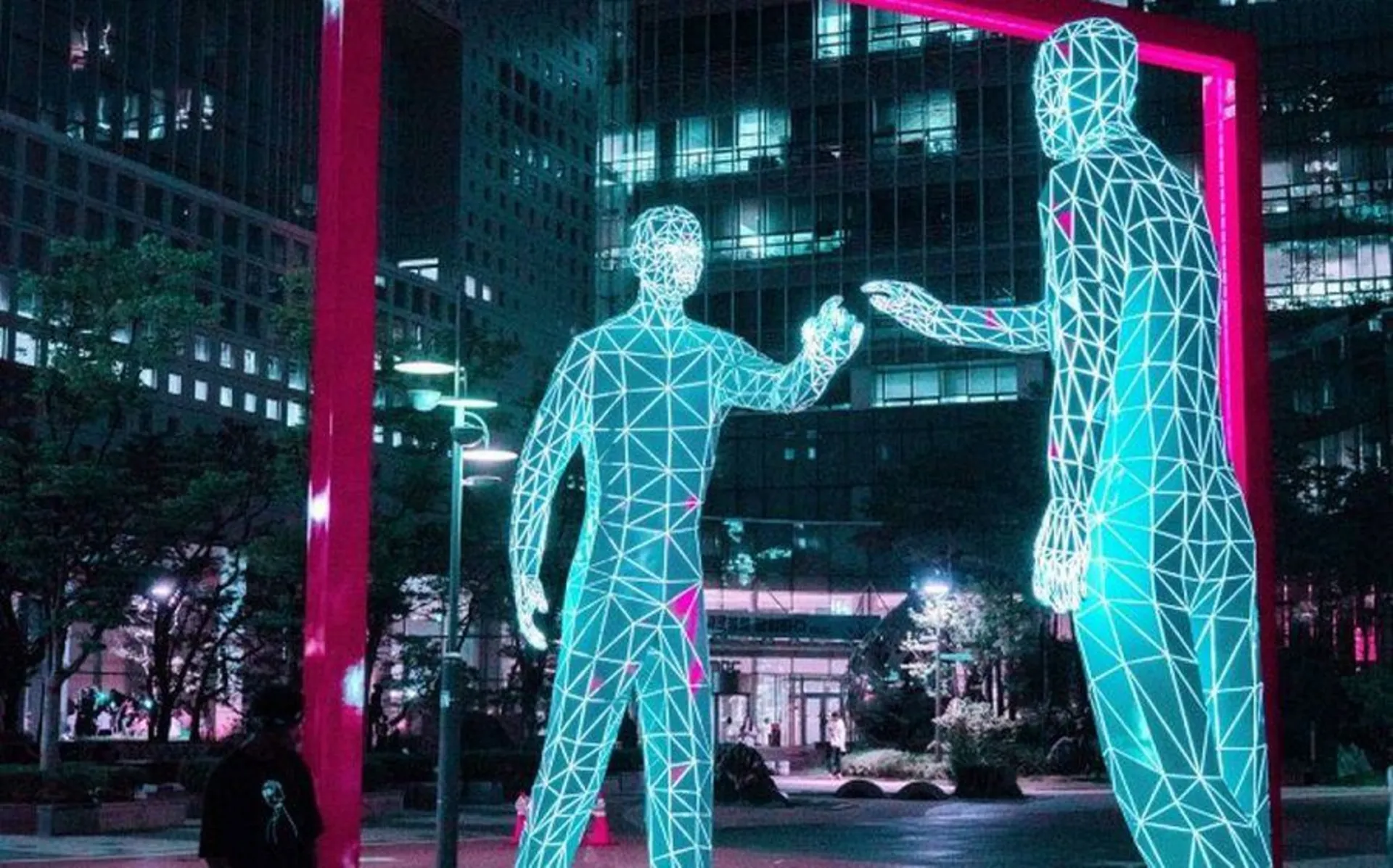

Physical objects are represented by a set of points in three-dimensional space that are connected by geometric elements such as triangles, lines, and curved surfaces. Basically, 3D models are first created by connecting points to form polygons. A polygon is an area bounded by at least three vertices (a triangle), and the overall integrity of the model and its suitability for animation depend on the structure of these polygons.
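A minimal sketch of this representation stores the model as a list of points plus a list of triangles that index into it. The tetrahedron coordinates and the `unique_edges` helper below are example choices for illustration, not a format used by any particular modeling tool.

```python
# Four points in 3D space (x, y, z).
vertices = [
    (0.0, 0.0, 0.0),
    (1.0, 0.0, 0.0),
    (0.0, 1.0, 0.0),
    (0.0, 0.0, 1.0),
]

# Each triangle lists the indices of its three vertices.
triangles = [(0, 1, 2), (0, 1, 3), (0, 2, 3), (1, 2, 3)]


def unique_edges(tris):
    """Collect every edge once, regardless of which triangle it came from."""
    edges = set()
    for a, b, c in tris:
        for u, v in ((a, b), (b, c), (c, a)):
            edges.add((min(u, v), max(u, v)))
    return edges


edges = unique_edges(triangles)
# For a closed mesh like this one: vertices - edges + faces = 2.
euler = len(vertices) - len(edges) + len(triangles)
```

Sharing vertices between triangles by index, instead of repeating coordinates, keeps the mesh consistent: moving one point moves every polygon attached to it.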

Three-dimensional (3D) models are built with two main methods: polygon modeling, in which vertices are connected by line segments into a mesh, and curve modeling, in which surfaces are defined by curves shaped by weighted control points. Today, because the first method is more flexible and can be rendered faster, the vast majority of 3D models are built as textured polygonal models. One of the main tasks of graphics cards is texture mapping, which adds a texture to an image or 3D model. For example, adding a stone texture to a model makes it look like real stone, and a texture resembling a human face can be applied to give a scanned 3D model a face.

In the second method, surfaces are defined by curves that are shaped by weighted control points. The curve is guided by these points but does not necessarily pass through them (they are not interpolated). Increasing the weight of a point pulls the curve closer to that point.
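One common curve of this kind is the rational quadratic Bezier curve, used here purely as an illustration; the article does not say which curve type it has in mind. The function name and sample points are assumptions for the sketch.

```python
def rational_bezier(p0, p1, p2, w1, t):
    """Point at parameter t on a rational quadratic Bezier curve.

    p0 and p2 are the end points, p1 is the middle control point,
    and w1 is the weight of p1 (the end points have weight 1).
    """
    b0 = (1 - t) ** 2
    b1 = 2 * t * (1 - t) * w1
    b2 = t ** 2
    denom = b0 + b1 + b2
    x = (b0 * p0[0] + b1 * p1[0] + b2 * p2[0]) / denom
    y = (b0 * p0[1] + b1 * p1[1] + b2 * p2[1]) / denom
    return (x, y)


start, control, end = (0.0, 0.0), (1.0, 2.0), (2.0, 0.0)

mid_light = rational_bezier(start, control, end, 1.0, 0.5)  # (1.0, 1.0)
mid_heavy = rational_bezier(start, control, end, 3.0, 0.5)  # (1.0, 1.5)
```

With weight 1 the middle of the curve sits at height 1.0, well below the control point at height 2.0, so the point is not interpolated. Raising the weight to 3 lifts the curve to 1.5, closer to the control point, exactly as described above.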

Layout and animation

After modeling, and before the objects are rendered into an image, it must be decided how the objects (models, lights, etc.) are placed and how they move in the scene. By defining the location and size of each object, the spatial relationships between objects are established. Motion, or animation, refers to the temporal description of an object (how it moves and changes shape over time). Common layout and animation methods include keyframing, inverse kinematics, and motion capture; these techniques are often used in combination.
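Keyframing, the first of these methods, can be sketched as follows. The example assumes straight-line (linear) interpolation between keyframes, which is the simplest choice; animation tools usually offer smoother curves as well. The function name and keyframe values are hypothetical.

```python
def interpolate_keyframes(keyframes, time):
    """Return the position at `time` from a time-sorted list of (time, position)."""
    if time <= keyframes[0][0]:
        return keyframes[0][1]
    if time >= keyframes[-1][0]:
        return keyframes[-1][1]
    for (t0, p0), (t1, p1) in zip(keyframes, keyframes[1:]):
        if t0 <= time <= t1:
            s = (time - t0) / (t1 - t0)  # progress between the two keyframes
            return tuple(a + (b - a) * s for a, b in zip(p0, p1))


# The animator sets only three poses; everything in between is computed.
keys = [
    (0.0, (0.0, 0.0, 0.0)),
    (2.0, (4.0, 0.0, 0.0)),
    (3.0, (4.0, 2.0, 0.0)),
]

halfway = interpolate_keyframes(keys, 1.0)     # (2.0, 0.0, 0.0)
turning = interpolate_keyframes(keys, 2.5)     # (4.0, 1.0, 0.0)
```

The same idea extends to rotation, scale, or any other property that changes over time.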

Rendering

In the last stage, based on the placement of lights, the types of surfaces, and other specified factors, the computer performs the calculations needed to produce the finished image. At this stage, materials and textures are the data used for rendering.

How light travels from one surface to another and how it scatters and interacts on surfaces are the two basic operations in rendering, and both are usually implemented in 3D graphics software. Rendering is the final process of creating a 2D image or animation from a 3D model and a prepared scene, using a variety of different and often specialized methods; it may take only a fraction of a second or as long as several days for a single image or frame.

Shading technique

After the development of graphics processors to reduce the workload of processors and provide a platform for producing images with much more impressive quality than before, Nvidia and ATI gradually became the main players in the world of computer graphics. These two competitors worked hard to outdo each other and each tried to compete by increasing the number of levels in modeling and rendering and improving techniques. The shading technique can be seen as the birth of their competition.

In computer graphics, shading refers to the process of changing the color of an object, surface, or polygon in a 3D scene based on factors such as its distance from the light and the angle between the surface and the light.

Shading during the rendering process is done by a program called Shader, which calculates the appropriate levels of light, dark, and color during the rendering of a 3D scene. In fact, shaders have evolved to perform a variety of specialized functions in graphic effects, video post-processing, as well as general-purpose computing on GPUs.

Shader changes the color of surfaces in a 3D model based on the angle of the surface to the light source or light sources.

- In the first image that you can see below, all the surfaces of the box are rendered with one color and only the edge lines are marked to make the image better visible.

- The second image shows the same model without the edge lines; In this case, it is a bit difficult to recognize where one face of the box ends and then starts again.

- In the third image, the shading technique has been applied; The final image looks more realistic and the surfaces are easier to recognize.
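The idea that a surface's color depends on its angle to the light can be sketched with Lambert's cosine law, a standard diffuse lighting model that this article does not name and that is used here only as an illustration. The function names and sample colors are assumptions.

```python
import math


def normalize(v):
    """Scale a vector to length 1."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def lambert_shade(base_color, normal, to_light):
    """Darken base_color by the cosine of the angle between surface and light."""
    n = normalize(normal)
    l = normalize(to_light)
    # Dot product of unit vectors = cosine of the angle; clamp faces turned away.
    intensity = max(0.0, sum(a * b for a, b in zip(n, l)))
    return tuple(round(c * intensity) for c in base_color)


orange = (200, 100, 50)

facing = lambert_shade(orange, (0, 0, 1), (0, 0, 1))    # light head-on
angled = lambert_shade(orange, (0, 0, 1), (0, 1, 1))    # light at 45 degrees
away = lambert_shade(orange, (0, 0, 1), (0, 0, -1))     # light behind the face
```

Faces of a box that point in different directions get different intensities from the same light, which is what makes the edges between them visible in a shaded render.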

Shaders are widely used in film post-processing, computer-generated imagery, and video games to produce a wide range of effects. Shaders are simple programs that describe the properties of a vertex or a pixel. Vertex shaders describe properties of each vertex such as position, texture coordinates, and color, while pixel shaders describe the color, z-depth, and alpha value of each pixel.

Three types of shaders are in common use: pixel, vertex, and geometry shaders. Older graphics cards use separate processing units for each shader type, but newer cards have unified shaders that can run any type and make more efficient use of the hardware.

Pixel shaders

Pixel shaders calculate the color and other properties of each pixel. The simplest pixel shaders output a single screen pixel as a color value. Beyond simple lighting models, pixel shaders can produce more complex output such as color space conversion, adjustments to saturation, brightness (HSL/HSV), or contrast, blur, light bloom, volumetric lighting, normal mapping (for a depth effect), bokeh, and cel shading. They can also be used for posterization, bump mapping, distortion, blue screen or green screen effects, edge and motion highlighting, and simulated psychedelic effects.

In 3D graphics, however, a pixel shader alone cannot create some complex effects, because it operates on a single pixel only and has no access to vertex information. If the contents of the entire screen are passed to the shader as a texture, though, it can sample the screen and neighboring pixels, which enables a wide range of 2D post-processing effects such as blur or edge detection and enhancement.
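The post-processing idea in the last sentence can be sketched on the CPU in Python. A real pixel shader would run this logic once per pixel on the GPU in a shading language; here the "screen texture" is just a list of rows of grayscale values, and the 3x3 box blur is one simple example of such an effect.

```python
def box_blur(image):
    """Blur a grayscale image by averaging each pixel with its neighbors."""
    height, width = len(image), len(image[0])
    out = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total = 0
            count = 0
            # Sample the 3x3 neighborhood, skipping positions off the screen.
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < height and 0 <= nx < width:
                        total += image[ny][nx]
                        count += 1
            out[y][x] = total // count
    return out


# A single bright pixel on a black screen...
screen = [
    [0, 0, 0],
    [0, 90, 0],
    [0, 0, 0],
]

# ...is smeared across its neighbors by the blur.
blurred = box_blur(screen)
```

Each output pixel is computed independently from the input image, so every pixel could be handled at the same time, which is why this kind of work suits a GPU.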

Vertex shaders

Vertex shaders are the most common type of 3D shaders and run once on each vertex given to the GPU. The purpose of using these shaders is to convert the three-dimensional position of each vertex in the virtual space into two-dimensional coordinates for display on the monitor. Vertex shaders can manipulate properties such as position coordinates, color, and texture, but cannot create new vertices.
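The conversion from a 3D position to 2D screen coordinates can be sketched with a simple perspective projection. The example assumes the vertex is already in camera space with the camera looking along the positive z axis, uses a 640 x 480 screen and a focal length of 1 as sample values, and leaves out the depth value a real vertex shader also outputs; production pipelines use 4x4 matrices instead.

```python
def project_vertex(position, focal_length=1.0, width=640, height=480):
    """Project a camera-space vertex (z > 0) to pixel coordinates."""
    x, y, z = position
    # Perspective divide: farther points move toward the screen center.
    ndc_x = focal_length * x / z
    ndc_y = focal_length * y / z
    # Map the range [-1, 1] to pixels; screen y grows downward.
    screen_x = (ndc_x + 1) * 0.5 * width
    screen_y = (1 - ndc_y) * 0.5 * height
    return (screen_x, screen_y)


center = project_vertex((0.0, 0.0, 5.0))   # (320.0, 240.0)
near = project_vertex((1.0, 1.0, 2.0))     # (480.0, 120.0)
far = project_vertex((1.0, 1.0, 4.0))      # (400.0, 180.0)
```

The same corner lands closer to the center of the screen when it is farther away, which is what produces the impression of depth. The function runs once per vertex and never adds or removes vertices, matching the description above.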

In 2000, ATI introduced the Radeon R100 series of graphics cards and, with it, launched the lasting legacy of the Radeon line. The first Radeon graphics cards were fully compatible with DirectX 7 and used ATI's HyperZ technology, which combines three techniques (Z compression, fast Z clear, and hierarchical Z buffering) to save bandwidth and improve rendering efficiency.

In 2001, Nvidia released the GeForce 3 series, the first graphics cards in the world with programmable pixel shaders. Five years later, ATI was bought by AMD, and since then Radeon graphics cards have been sold under the AMD brand. Shader programs needed parallelization to calculate and render quickly. To address this, Nvidia introduced the concept of the warp, a group of threads that run together on the graphics processor, which is explained further below.

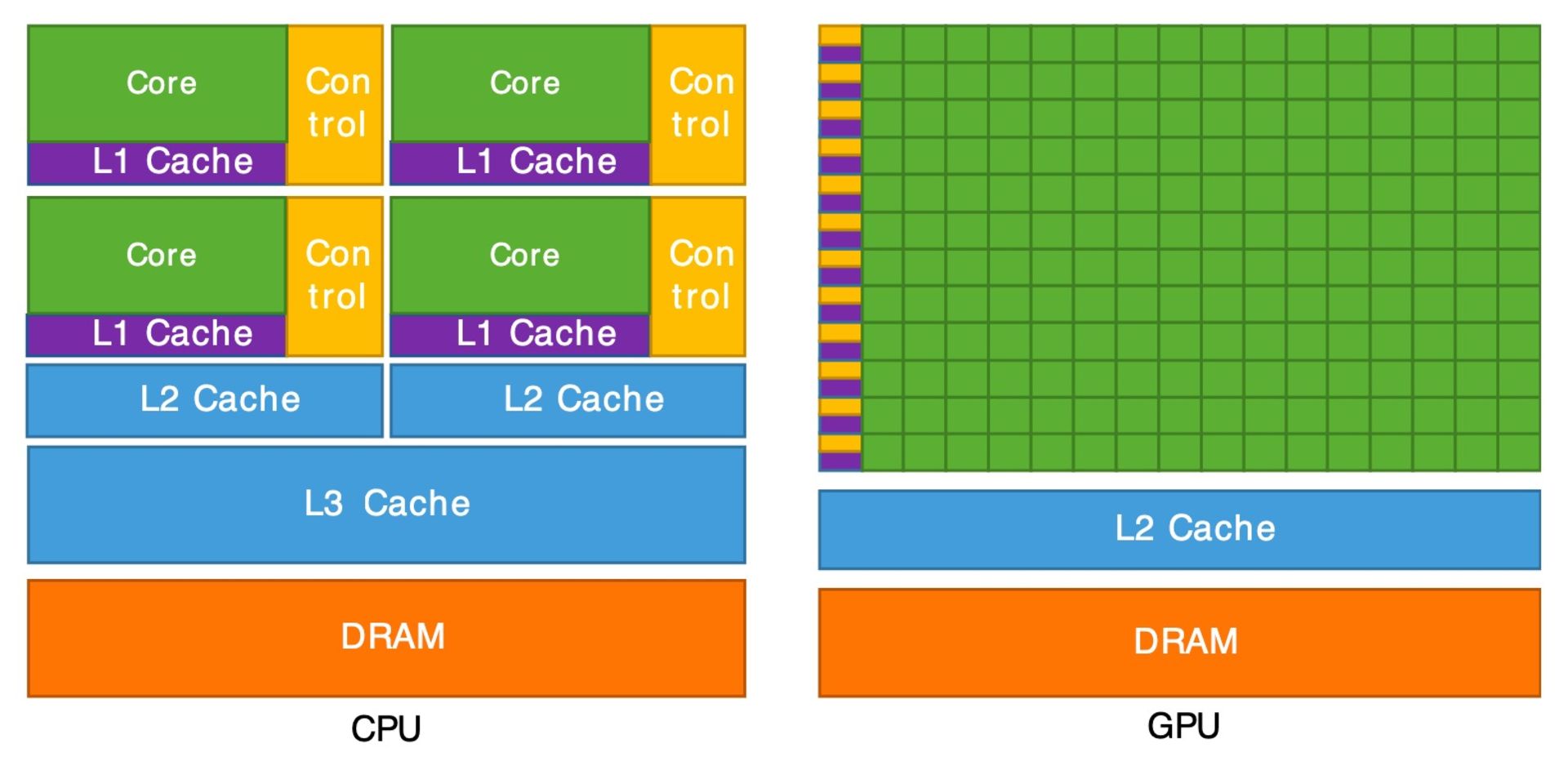

Difference between GPU and CPU

The GPU evolved from the beginning as a complement to the CPU, to lighten that unit's workload. Today, CPUs keep getting more powerful through architectural advances, higher frequencies, and more cores, while GPUs are developed specifically to speed up graphics processing.

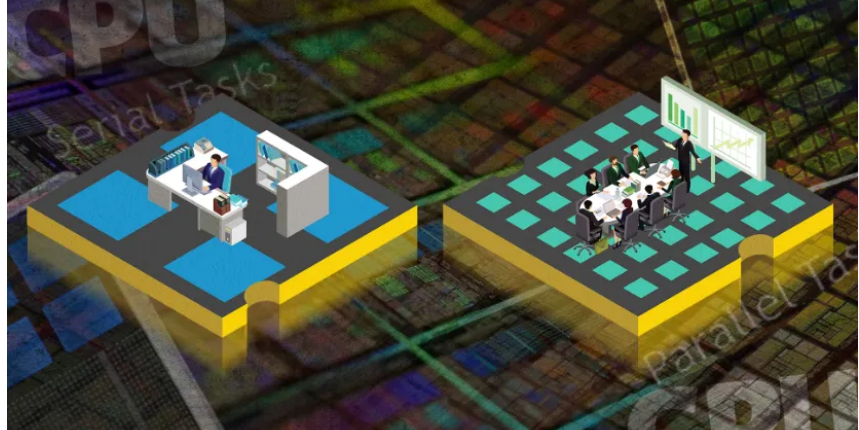

Processors are designed to complete a single task with the lowest possible latency and the highest possible speed, and to switch between operations very quickly. In effect, CPUs process work serially.

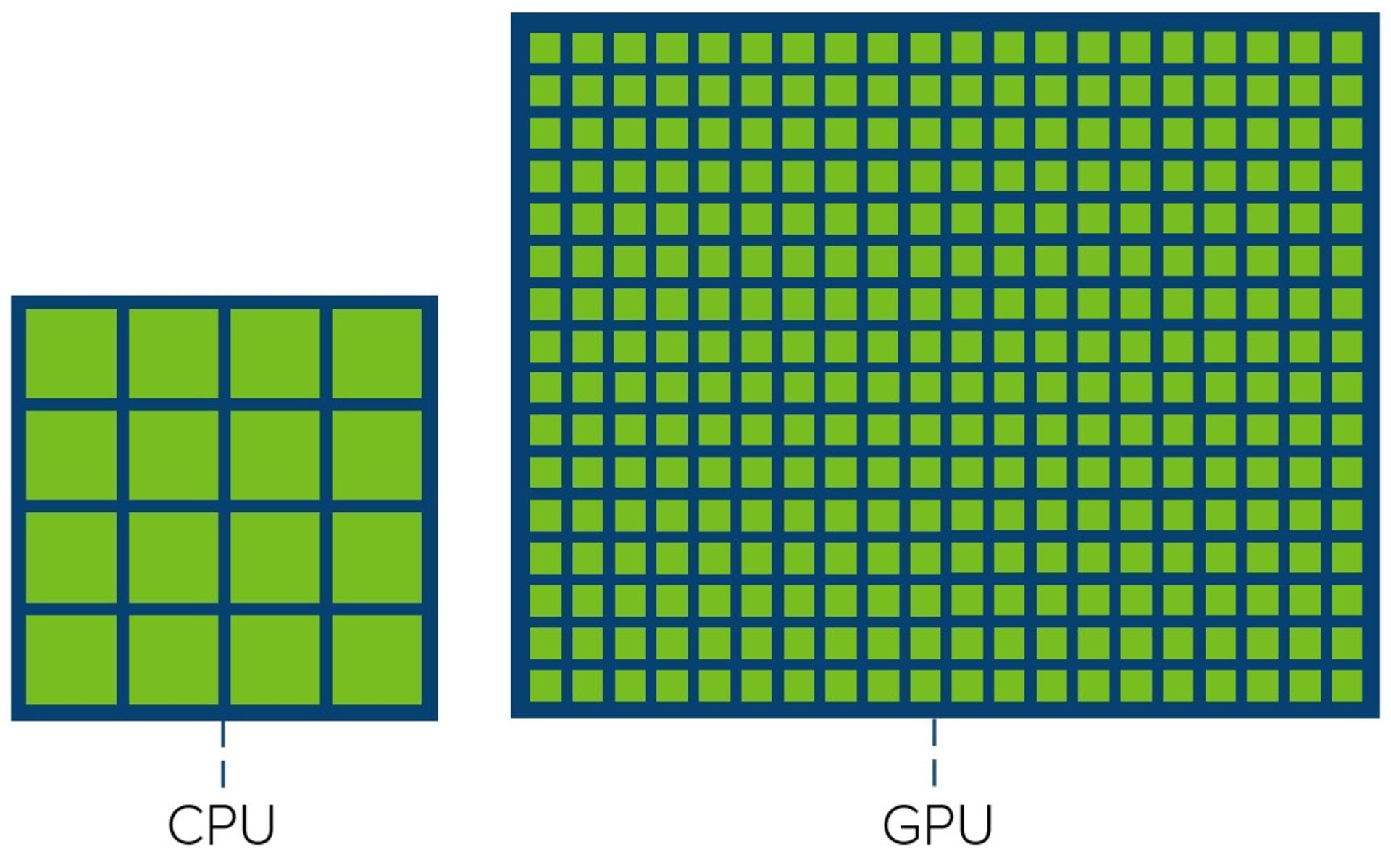

On the other hand, the graphics processor has been specifically developed to optimize the performance of graphics processing and provides the possibility of doing things simultaneously and in parallel. In the image below, you can see the number of cores of a processor and the number of cores of a graphics processor; This image shows that the main difference between CPU and GPU is the number of cores they have to process a task.

From the comparison of the overall architecture of the processors and graphics cards, we can find many similarities between these two units. Both use similar structures in the cache layers and both use a controller for memory and a main RAM. An overview of modern processor architecture suggests that low-latency memory access is the most important factor in processor design, with a focus on memory and cache layers (exact layout depends on vendor and processor model).

Each processor consists of several cache layers:

- Level one cache memory (L1) is the fastest, smallest, and closest memory to the processor and stores the most important data needed for processing.

- The next layer is the level two cache memory (L2) or the external cache memory, which is slower and larger than L1.

- The L3 cache is shared by all cores; it is larger and slower than the L1 and L2 caches. Where an L4 cache is present, it is likewise larger and slower than L1 and L2, and the two usually play a similar role. If the data is not found in any cache layer, it is fetched from main RAM (DDR).

Looking at a general overview of GPU architecture (the exact layout depends on the manufacturer and model), we can see that this unit is built around keeping its many cores busy rather than around fast cache access or low latency. The GPU consists of several groups of cores, and each group shares a level-one cache.

Compared with the processor, the graphics processor has fewer cache layers with less capacity. It devotes more of its transistors to computation and is less concerned with retrieving data from memory quickly; the graphics processor is designed for parallel computation.

High-performance computing is one of the effective and reliable uses of parallel processing to run advanced applications; Precisely for this reason, GPUs are suitable for this kind of calculations.

In simple terms, let’s say you have two options for doing some kind of heavy computation:

- Using a small number of powerful cores that perform processes serially.

- Using a high number of not-so-powerful cores that can perform several processes simultaneously.

In the first scenario, if we lose one of the cores, we will face a serious problem: the remaining cores have to absorb its work and the processing power is greatly reduced. On the other hand, if we lose a core in the second scenario, there will be no noticeable change in processing and the rest of the cores will continue to work.

The GPU performs several tasks at the same time and the CPU performs one task at a very high speed

The memory bandwidth of the GPU is much higher than that of the processor, so it handles high-volume parallel processing much better. The most important point about graphics processors is that they are developed for parallel processing: if an algorithm or calculation is serial and cannot be parallelized, it gains nothing from the GPU and may even run slower there. CPU cores are individually more powerful than GPU cores, but the bandwidth of the CPU is much lower than that of the GPU.
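The trade-off between a few fast cores and many slower cores can be made concrete with a toy timing model. The sketch below is only an illustration: the core counts, per-core speeds, and the `run_time` helper are hypothetical, and the model ignores memory bandwidth and scheduling overhead.

```python
def run_time(serial_work, parallel_work, cores, speed_per_core):
    """Estimate run time for a job with a serial part and a parallel part.

    The serial part runs on one core; the parallel part is split evenly
    across all cores. Units are arbitrary.
    """
    serial_time = serial_work / speed_per_core
    parallel_time = parallel_work / (speed_per_core * cores)
    return serial_time + parallel_time


# Hypothetical devices: a few fast cores versus many slow cores.
CPU = {"cores": 8, "speed_per_core": 4.0}
GPU = {"cores": 1024, "speed_per_core": 1.0}

# A fully parallel job favours the many-core device...
print(run_time(0, 1024, **CPU), run_time(0, 1024, **GPU))
# ...while a fully serial job favours the fast cores.
print(run_time(1024, 0, **CPU), run_time(1024, 0, **GPU))
```

Under these assumed numbers, the many-core device finishes the parallel job far sooner, while the same device is several times slower than the fast cores on the serial job.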

Familiarity with GPU architecture

At first glance, the CPU has larger but fewer computing units than the GPU. Of course, keep in mind that a core in the processor works faster and smarter than a core in the GPU.

Over time, the frequency of processor cores has gradually increased to improve performance, and on the contrary, the frequency of GPU cores has been reduced to optimize consumption and accommodate installation in phones or other devices.

The ability to execute instructions out of order can be seen as proof of the intelligence of the processor cores. As mentioned, the central processing unit can execute instructions in a different order from the one defined for it, or predict the instructions needed in the near future and prepare their operands before execution, to optimize the system as much as possible and save time.

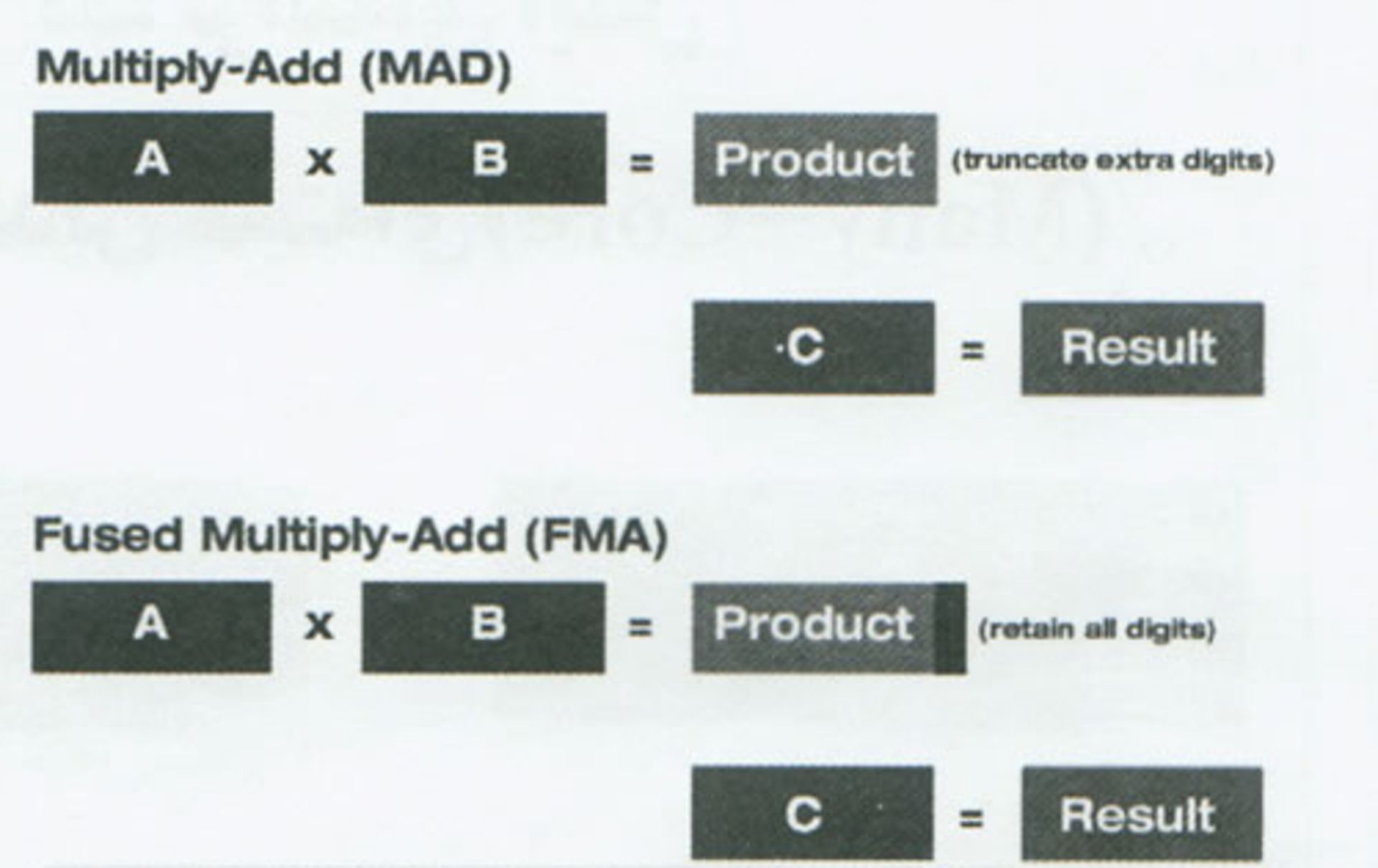

In contrast, a graphics processor core does not handle this kind of complexity and does little beyond executing the instructions it is given. In general, the main specialization of GPU cores has been floating-point operations such as multiplying two numbers and adding a third (A x B + C = Result). When the product is rounded or truncated before the addition, the operation is called multiply-add, or MAD for short. When the product is instead used at full accuracy (without truncation) and only the final result is rounded, it is called fused multiply-add, or FMA.
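The difference between MAD and FMA shows up only in the last bits of the result, so a small numeric experiment helps. The sketch below emulates a fused operation with exact rational arithmetic from Python's standard `fractions` module; the `mad` and `fma` helpers are hypothetical names for illustration, not GPU instructions, and the inputs are chosen so that rounding the product loses information.

```python
from fractions import Fraction


def mad(a, b, c):
    """Multiply-add with two roundings: the product is rounded, then the sum."""
    return a * b + c


def fma(a, b, c):
    """Fused multiply-add emulated with exact rationals: one rounding at the end."""
    exact = Fraction(a) * Fraction(b) + Fraction(c)
    return float(exact)


a = 1.0 + 2.0**-30
b = 1.0 - 2.0**-30
c = -1.0

print(mad(a, b, c))  # the tiny term is lost when the product is rounded
print(fma(a, b, c))  # the tiny term survives because rounding happens once
```

Here the exact product is 1 - 2^-60, which cannot be stored in a double-precision float, so the unfused version rounds it to 1 and returns zero, while the fused version keeps the small remainder.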

The latest GPU microarchitectures today are no longer limited to FMA and perform more complex operations such as ray tracing or tensor core processing. Tensor cores and ray tracing cores are also designed to provide hyper-realistic renderings.

Tensor kernels

Starting in 2017, Nvidia produced graphics processors equipped with additional cores that, in addition to shaders, were also used for artificial intelligence, deep learning, and neural network processing. These cores are called tensor cores. A tensor is a mathematical concept whose smallest imaginable unit has zero dimensions and contains only one value (a scalar). By increasing the number of dimensions, other tensor structures are:

- One-dimensional tensor: vector (a single row or column of values)

- Two-dimensional tensor: matrix (values arranged in rows and columns)

Tensor cores fall into the category of SIMD or “single instruction for multiple data”, and their use in GPUs turns the chip into something much smarter than a graphics calculator, covering a wide range of computing and parallel processing needs. In 2017, Nvidia introduced a completely new architecture called Volta, which was designed and built for professional markets; graphics cards based on it were equipped with cores for tensor calculations, but GeForce graphics processors did not use them.

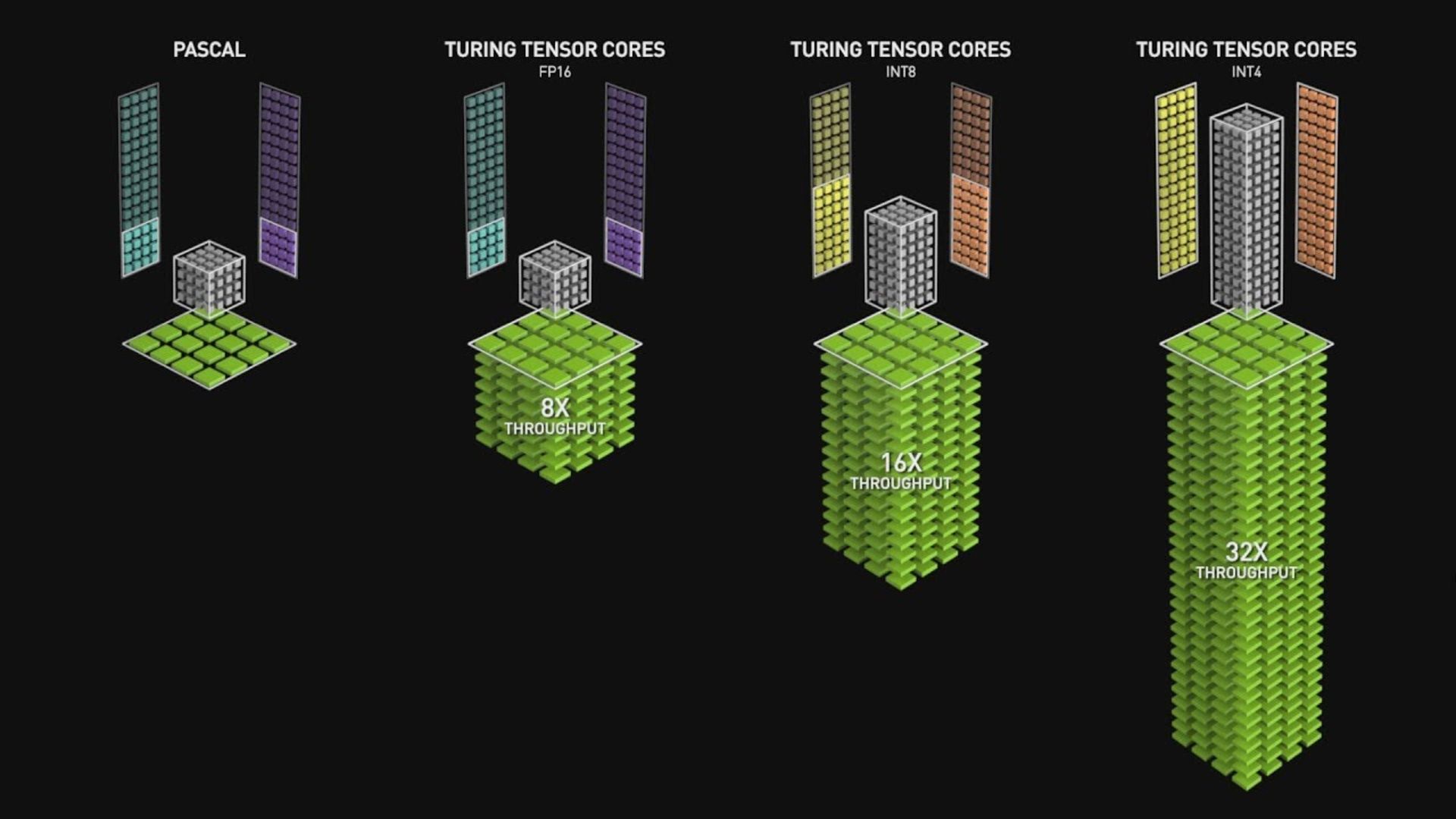

At that time, the tensor cores were capable of multiplying 16-bit floating-point numbers (FP16) and adding the results in 32-bit floating point (FP32). Less than a year later, Nvidia introduced the Turing architecture; for tensor cores, the main change from the previous architecture was support on GeForce GPUs and for data formats such as eight-bit integers.
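The combination of 16-bit multiplication and 32-bit addition can be emulated in plain Python. This is a sketch for illustration only: real tensor cores operate on small fixed-size matrix tiles in hardware, and the `to_fp16`, `to_fp32`, and `mma` helpers are hypothetical names that use the standard `struct` module to round values to each format.

```python
import struct


def to_fp16(x):
    """Round a Python float to the nearest half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x):
    """Round a Python float to the nearest single-precision (FP32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def mma(a, b, c):
    """Compute D = A x B + C with FP16 inputs and an FP32 accumulator."""
    rows, inner, cols = len(a), len(b), len(b[0])
    d = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = to_fp32(c[i][j])  # the accumulator is kept in FP32
            for p in range(inner):
                # Inputs are rounded to FP16 before they are multiplied.
                product = to_fp16(a[i][p]) * to_fp16(b[p][j])
                acc = to_fp32(acc + product)
            d[i][j] = acc
    return d


A = [[1.0, 2.0], [3.0, 4.0]]
B = [[5.0, 6.0], [7.0, 8.0]]
C = [[1.0, 1.0], [1.0, 1.0]]
print(mma(A, B, C))
print(to_fp16(0.1))  # FP16 cannot represent 0.1 exactly
```

Keeping the accumulator in FP32 limits how much rounding error builds up as many small FP16 products are summed, which is the usual motivation for this kind of mixed precision.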

In 2020, the Ampere architecture was introduced in A100 graphics processors for data centers; in this architecture, the efficiency and power of the cores increased, the number of operations per cycle quadrupled, and new data formats were added to the supported set. Today, tensor cores are specialized and limited pieces of hardware that are used in a small number of consumer graphics cards. Intel and AMD (the other two players in the world of computer graphics) do not have tensor cores in their GPUs, but they may offer similar technology in the future.

- Tensors are widely used in physics, engineering, and mathematics: tensor cores can perform complex calculations in electromagnetism, astronomy, and fluid mechanics.

- Tensor cores can increase the resolution of images: the image is rendered at a lower resolution and the tensor cores increase its quality after rendering.

- Tensor cores increase frame rate: they can recover frame rate in games after ray tracing is enabled.

Ray tracing engine

In addition to cores and cache layers, GPUs may also include hardware to accelerate ray tracing, which simulates light from a source striking objects and produces regions with different levels of illumination. Fast ray tracing in video games makes more realistic, higher-quality images possible.

Ray tracing is one of the biggest advancements in recent years in computer graphics and the gaming industry. At first, this feature was only used in the film industry, computer image production, and in animation and visual effects, but today the PS5 and Xbox Series X gaming consoles also support ray tracing.

In the real world, everything we see is the result of light hitting objects and reflecting to our eyes. Ray tracing does the same thing in reverse: it follows rays back from the viewer and identifies the light sources, the path of the light rays, the material, the type of shadow, and the amount of reflection when a ray hits an object. The ray tracing algorithm displays the reflection of light from objects made of different materials in different and more realistic forms, draws the shadows of objects that are in the path of the light beam depending on whether they are transparent or semi-transparent, and follows the laws of physics. For this reason, the images produced with this feature are very close to reality.
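The basic building block of ray tracing is a test for where a ray meets an object. As a minimal sketch, the example below checks a ray against a single sphere by solving the standard quadratic for the hit distance; the `ray_sphere_hit` helper and the scene values are hypothetical, and a real renderer would add materials, shadows, and reflections on top of this.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Return the distance along the ray to the nearest hit, or None on a miss.

    Points on the ray are origin + t * direction; substituting into the
    sphere equation gives a quadratic a*t^2 + b*t + c = 0 in t.
    """
    oc = [o - c for o, c in zip(origin, center)]
    a = sum(d * d for d in direction)
    b = 2.0 * sum(d * o for d, o in zip(direction, oc))
    c = sum(o * o for o in oc) - radius * radius
    discriminant = b * b - 4.0 * a * c
    if discriminant < 0:
        return None  # the ray never touches the sphere
    root = math.sqrt(discriminant)
    for t in ((-b - root) / (2.0 * a), (-b + root) / (2.0 * a)):
        if t > 0:
            return t  # nearest intersection in front of the ray origin
    return None  # the sphere is entirely behind the ray


camera = (0.0, 0.0, 0.0)
sphere_center = (0.0, 0.0, -5.0)

print(ray_sphere_hit(camera, (0.0, 0.0, -1.0), sphere_center, 1.0))  # hit
print(ray_sphere_hit(camera, (0.0, 1.0, 0.0), sphere_center, 1.0))   # miss
```

A renderer repeats tests like this for every pixel and every bounce, which is why dedicated hardware for intersection tests makes such a difference to frame rate.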

Ray tracing off (right side) versus ray tracing on (left side)

Nvidia first released ray tracing in 2018 on RTX series graphics under the Turing architecture, and then introduced a new driver that added ray tracing support to some GTX series graphics, which perform less well at it than RTX cards.

AMD also brought ray tracing to the PS5 and Xbox Series X and S consoles with the RDNA 2 architecture. Activating this feature in games reduces the frame rate due to the heavy processing load; for example, if a game runs at 60 fps on a system in normal mode, it may provide only 30 fps with ray tracing.

Frame rate, measured in frames per second (FPS), is a good measure of GPU performance, indicating the number of completed images that can be displayed per second; for comparison, the human eye is often said to process about 25 frames per second, whereas fast action games need at least 60 frames per second to look smooth.
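Frame rate is easier to reason about when it is converted into the time available for each frame. The short sketch below does that conversion for a game that drops from 60 fps to 30 fps with ray tracing enabled; `frame_time_ms` is a hypothetical helper name.

```python
def frame_time_ms(fps):
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps


normal = frame_time_ms(60)
ray_traced = frame_time_ms(30)

print(f"60 fps -> {normal:.2f} ms per frame")
print(f"30 fps -> {ray_traced:.2f} ms per frame")
print(f"extra cost per frame: {ray_traced - normal:.2f} ms")
```

Halving the frame rate means each frame takes twice as long, so in this case ray tracing costs as much time per frame as all the rest of the rendering combined.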

What is GPGPU?

Many users took advantage of the fast parallel processing of graphics processors and moved parallelizable work onto this unit, regardless of the traditional task of the graphics processor. GPGPU, or general-purpose graphics processor, was the answer that Nvidia introduced to support this kind of use.

GPGPU (abbreviation of General-Purpose Graphics Processing Unit) is a graphics processing unit that also performs non-specialized calculations (or CPU tasks).

In fact, GPGPUs are used to perform tasks that were previously performed by high-powered CPUs, such as physics calculations, encryption/decryption, scientific computing, and the generation of digital currencies such as Bitcoin. Since GPUs are built for massive parallelism, they can reduce the computational burden on the most powerful processors. That is, the same cores used to shade multiple pixels simultaneously can similarly process multiple data streams simultaneously. Of course, these cores are not as complex as processor cores.
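The idea that the same simple core logic is applied to many data elements at once can be sketched in Python. The example below imitates the shape of a GPU program: a small per-element function (here `saxpy_kernel`, computing a * x + y) and a `launch` helper that runs it for every index. Both names are hypothetical, and the loop runs sequentially here, whereas a GPU would execute the iterations across its cores in parallel.

```python
def saxpy_kernel(i, a, x, y, out):
    """Work done for one element: out[i] = a * x[i] + y[i]."""
    out[i] = a * x[i] + y[i]


def launch(kernel, n, *args):
    """Run the kernel once per index; a GPU would run these concurrently."""
    for i in range(n):
        kernel(i, *args)


x = [1.0, 2.0, 3.0]
y = [10.0, 20.0, 30.0]
out = [0.0] * len(x)

launch(saxpy_kernel, len(x), 2.0, x, y, out)
print(out)
```

Because each index is handled independently of the others, the work can be spread over as many cores as are available, which is what makes this pattern a good fit for a GPU.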

The GeForce 3 was Nvidia’s first GPU to feature programmable shaders. At the time, programmers aimed to make rasterized or bitmapped 3D graphics more realistic, and this Nvidia GPU provided capabilities such as 3D transformation, bump mapping, and lighting calculations.

After the GeForce 3, the ATI 9700 GPU was introduced with support for DirectX 9 and programmability that brought it closer to CPUs. With the introduction of Windows Vista, along with DirectX 10, unified shader cores became standard. This newly discovered capability of GPUs made it possible to move more CPU-style computing onto the GPU.

Since the release of DirectX 10, which featured unified shaders for Windows Vista, more focus has been placed on GPGPUs, and higher-level languages have been developed to facilitate programming for computations on GPUs. AMD and Nvidia both pursued GPGPU development through programming interfaces (the open OpenCL standard and Nvidia’s CUDA).

What is CUDA?

Simply put, CUDA allows programs to use the GPU as a co-processor. The processor hands certain tasks to a graphics unit equipped with CUDA cores, which are optimized to process and calculate things like lighting, motion, and interaction as quickly as possible, and even process several paths simultaneously when necessary. The processed data is then sent back to the processor, which uses it for larger and more important calculations.

Advantages of CUDA kernels

Computer systems are driven by software, so most processing has to be expressed in program code. Since the main function of CUDA lies in calculation, data generation, and image manipulation, using CUDA cores helps programmers greatly reduce the time spent on processing effects, rendering, and exporting output, especially when scaling, as well as in simulations such as fluid dynamics and forecasting. CUDA also works well for light sources and ray tracing, and functions like rendering effects, encoding, and video conversion are processed much faster with its help.

CUDA is designed to work with programming languages such as C, C++, and Fortran, making it easier for experts in parallel programming to use the GPU. In contrast, previous APIs such as Direct3D and OpenGL required advanced skills in graphics programming.

This design is more efficient than CPUs for parallel processing of large blocks of data, as in the following examples:

- Cryptographic hash functions

- Machine learning

- Molecular dynamics simulation

- Physics engines

- Sorting algorithms

- Programming skills

Disadvantages of CUDA kernels

CUDA is Nvidia’s proprietary approach to general-purpose GPU computing (GPGPU), so you can only take advantage of it on Nvidia products. For example, if you have a Mac Pro, you cannot use CUDA, because this device uses AMD graphics; additionally, fewer applications support CUDA than its alternative.

OpenCL; CUDA replacement

OpenCL is a relatively new, open system that is considered an alternative to CUDA. Anyone can use this standard in their hardware or software without paying for the technology or a proprietary license. CUDA uses the graphics as a co-processor, while OpenCL transfers data entirely and uses the graphics more as a discrete processor. This difference in how the graphics are used may be hard to measure, but a more measurable difference between the two standards is that coding for OpenCL is harder than coding for CUDA. On the other hand, as a user you are not tied to any vendor, and support is so widespread that most apps don’t even mention it.

CUDA and OpenCL vs. OpenGL

As mentioned before, OpenGL can be seen as the beginning of the story of this competition; Of course, the purpose of developing this programming interface is not to use graphics as a general-purpose processor, and instead it is simply used to draw pixels or vertices on the screen. OpenGL is a system that allows graphics to render 2D and 3D images much faster than a CPU. Just as CUDA and OpenCL are alternatives to each other, OpenGL is an alternative to systems like DirectX on Windows.

Simply put, OpenGL renders images very quickly, and OpenCL and CUDA handle the necessary calculations when videos interact with effects and other media; OpenGL may place content in the editing interface and render it, but when it comes to color correction for this content, CUDA or OpenCL will do the math to change the pixels. Both OpenCL and CUDA can use the OpenGL system, and a graphics-equipped system with the latest OpenGL support will always be faster than a computer with a CPU and integrated graphics.

OpenCL or CUDA

The main difference between CUDA and OpenCL is that CUDA is a proprietary framework created by Nvidia, whereas OpenCL is open. Assuming that the software and system hardware support both options, it is recommended to use CUDA if you have Nvidia graphics; it works faster than OpenCL in most cases. Nvidia graphics also support OpenCL, although AMD graphics make more efficient use of OpenCL. The choice between CUDA and OpenCL depends on the needs of the individual, the type of work, the type of system, the workload, and the required performance.

For example, Adobe explains on its website that, with very few exceptions, everything that CUDA does for Premiere Pro can also be done by OpenCL. However, most users who have compared these two standards believe that CUDA is faster in Adobe products.

The most prominent brands

In the graphics processor market, AMD and Nvidia are well-known names. The former’s graphics business used to be ATI, which was founded in 1985 and later sold its GPUs under the Radeon brand name; Nvidia then became known as ATI’s competitor with the release of its first graphics processor in 1999. AMD bought ATI in 2006 and now competes with Nvidia and Intel on two different fronts. In fact, personal taste and brand loyalty are the most important factors that differentiate AMD and Nvidia.

Nvidia recently released GTX 10 series graphics, but AMD’s equivalents are generally more affordable choices. Other competitors such as Intel are also in the game and implement their graphics solutions on the chip, but currently, AMD and Nvidia can be identified as the most prominent brands in this field. The processing speed of Nvidia graphics is lower than AMD graphics. Nvidia graphics with more cores and higher frequencies are suitable for gaming, but since they have less cache, they cannot match AMD graphics in some parallel processing such as mining digital currencies. In the following, we will briefly introduce the three leading brands in the world of graphics and graphic architecture, and in the near future, we will examine these competitors, their architectures, and products in detail in a separate article.

Intel

Intel is one of the largest manufacturers of computer equipment in the world, which operates in the field of hardware production, various types of microprocessors, semiconductors, integrated circuits, processors, and graphics processors. AMD and Nvidia are two prominent competitors of Intel and each has its own fans. Intel’s first attempt at a dedicated graphics card was the Intel740, which was released in 1998, but failed due to poorer performance than market expectations, forcing Intel to stop developing discrete graphics products. However, this graphics technology survived in the Intel Extreme Graphics product line. After this failed attempt, Intel tried its luck in the world of graphics once again in 2009 with the Larrabee architecture. This time, the previously developed technology was used in the Xeon Phi architecture.

In April 2018, news broke that Intel was assembling a team to develop discrete graphics processing units aimed at both the data center and gaming markets, bringing in Raja Koduri, former head of AMD’s Radeon Technologies Group. Intel announced early on that it planned to introduce a discrete GPU in 2020. The first Xe discrete GPU, codenamed DG1, was released in October 2019 as a test vehicle and was expected to be used as a GPGPU for data center and self-driving applications. This product was initially made with 10nm lithography and then in 2021 with 7nm lithography and used 3D stacking packaging technology (Intel’s Foveros).

Intel Xe, or Xe for short, is the name of Intel’s graphics architecture, which has been used in Intel processors since the 11th generation. This company has also started developing discrete graphics and desktop graphics cards based on the Xe architecture under the Arc Alchemist brand. Xe is a family of architectures, each of which differs significantly from the others, and consists of the Xe-LP, Xe-HP, Xe-HPC, and Xe-HPG microarchitectures.

Unlike previous Intel GPUs that used execution units (EU) as the computing unit, Xe-HPG and Xe-HPC use Xe cores. Xe cores contain vector and matrix arithmetic logic units, called vector and matrix engines; in addition to these units, they are also equipped with L1 cache memory and other hardware.

- Xe-LP (low power): Xe-LP is a low-power variant of the Xe architecture and is used as integrated graphics in 11th-generation Intel Core processors, in Iris Xe MAX mobile discrete GPUs (codenamed DG1), and in H3C XG310 server GPUs (codenamed SG1). This series of graphics processors reaches higher clock frequencies at the same voltage as the previous generation. In its largest configuration, Xe-LP has 50% more execution units (EU) than the 64 execution units of the 11th-generation graphics architecture in the Ice Lake series, so its computing resources have increased significantly. Along with the 50% increase in execution units, Intel has improved the architecture of Xe-LP: instead of the two four-lane arithmetic logic units (ALU) of the previous generation, each ALU now has eight lanes. In addition, the Xe-LP architecture adds a level 1 cache, which reduces the delay in delivering data, and supports end-to-end data compression, which increases effective bandwidth and speeds up tasks such as game streaming, video chat recording, etc.

- Xe-HP (High Performance): Xe-HP is a high-performance data center variant optimized for FP64 performance and multi-tile scalability.

- Xe-HPC (High-Performance Computing): Xe-HPC is the high-performance computing variant of the Xe architecture. Each core in Xe-HPC includes 8 vector engines and 8 matrix engines, along with a large 512KB L1 cache.

- Xe-HPG (High-Performance Graphics): Xe-HPG is a high-performance graphics variant of the Xe architecture that builds on the Xe-LP microarchitecture with improvements from Xe-HP and Xe-HPC. Xe-HPG is focused on graphics performance and supports hardware-accelerated ray tracing, DisplayPort 2.0, neural network-based supersampling (XeSS) similar to Nvidia’s DLSS, and DirectX 12 Ultimate. Each Xe-HPG core contains 16 vector engines and 16 matrix engines.

Nvidia

Nvidia was founded in 1993 and is one of the main manufacturers of graphics cards and graphics processors (GPU). Nvidia produces different types of graphics units, each offering unique capabilities. In the following, we briefly introduce the micro-architectures of Nvidia graphics units and the improvements of each compared to the previous generation:

- Kelvin: The Kelvin microarchitecture was released in 2001 and was used in the GPU of the original Xbox game console. GeForce 3 and GeForce 4 series graphics units were released with this microarchitecture.

- Rankine: Nvidia introduced the Rankine microarchitecture in 2003 as an improved version of the Kelvin microarchitecture. This microarchitecture was used in the GeForce 5 graphics series. The video memory capacity in this microarchitecture was 256 MB and it supported vertex and fragment shading programs. Vertex shaders change the geometry of the scene and create a 3D layout. Fragment shaders also specify the color of each pixel in the rendering process.

- Curie: Curie, a microarchitecture used in GeForce 6 and 7 series graphics, was released in 2004 as a successor to Rankine. The video memory capacity in Curie was 512 MB and it was the first generation of Nvidia graphics processors that supported PureVideo video decoding.

- Tesla: The Tesla graphics microarchitecture was introduced in 2006 and made several significant changes to Nvidia’s GPU lineup. In addition to being used in GeForce 8, 9, 100, 200, and 300 series graphics units, the Tesla architecture is also used in Quadro graphics products for things other than graphics processing. In 2020, Nvidia stopped using the Tesla name to avoid confusion with the Tesla electric car brand.

- Fermi: Fermi was released in 2010 and offered features such as support for 512 CUDA cores, L1 cache /shared memory partitioning, 64KB capacity for RAM, and error correcting code (ECC) support. Some GeForce 8, GeForce 500, and GeForce 400 series graphics cards were produced based on this microarchitecture.

- Kepler: The Kepler microarchitecture was introduced after Fermi in 2012 and came with key improvements over the previous generation. It was equipped with new streaming multiprocessors (SMX) and supported TXAA, a temporal anti-aliasing method. In this technique, information from past frames is combined with the current frame to remove jagged edges: each pixel is sampled once per frame, but at a different location within the pixel in each frame, and the samples from past frames are blended with the current sample to create a better-quality image. The Kepler microarchitecture consumes less power, and the number of CUDA cores in it increased to 1536. It is capable of automatic overclocking through GPU Boost and is equipped with the GPUDirect feature, which lets graphics units communicate without going through the processor. Nvidia has used this microarchitecture in some GeForce 600, GeForce 700, and GeForce 800M series graphics units.

- Maxwell: The Maxwell microarchitecture was released in 2014. Compared to Fermi, the first generation of GPUs based on it offered more efficient multiprocessors as a result of improvements in control logic partitioning, lower dynamic power dissipation by gating the clock when a circuit is not in use, better instruction scheduling and workload balancing, 64 KB of dedicated shared memory for each execution unit, improved performance with the help of native shared memory, and support for dynamic parallelism. Some of the GeForce 700, GeForce 800M, GeForce 900, and Quadro Mxxx series graphics units were released with the Maxwell microarchitecture.

- Pascal: Pascal replaced the Maxwell microarchitecture in 2016. Compared to the previous generation, graphics based on this microarchitecture (GeForce 10 series) brought improvements such as NVLink support for higher speeds than the PCIe interface, High Bandwidth Memory 2 (HBM2) with 720 GB/s of bandwidth, compute preemption (temporarily interrupting a running process so that another process with a higher priority can run), and dynamic load balancing to optimize the use of GPU resources.

- Volta: Volta was a unique microarchitectural iteration released in 2017. Before Volta, most previous Nvidia GPU microarchitectures were developed for general use, but Volta GPUs were perfectly suited for professional applications; In addition, Tensor Cores were also used for the first time in this micro-architecture. As mentioned earlier, tensor cores are a new type of processing cores that perform specialized mathematical calculations and matrix operations and are specifically used in artificial intelligence and deep learning. Tesla V100, Tesla V100S, Titan V and Quadro GV100 series graphics units are developed based on the Volta microarchitecture.

- Turing: The Turing microarchitecture was introduced in 2018 and, in addition to supporting tensor cores, it also featured a number of consumer-focused GPUs. Nvidia uses this microarchitecture in its Quadro RTX and GeForce RTX series graphics processors. Turing supports RTX (Real-Time Ray Tracing) and is used for heavy calculations such as virtual reality (VR). Nvidia has used this microarchitecture for its GeForce 16, GeForce 20, Quadro RTX, and Tesla T4 graphics units.

- Ampere: Ampere is Nvidia’s newest microarchitecture, mostly used for high-performance computing (HPC) and artificial intelligence applications. It is equipped with tensor cores and supports the third-generation NVLink interface, structural sparsity (setting unnecessary parameters to zero to speed up artificial intelligence models), second-generation ray tracing, Multi-Instance GPU (MIG) for splitting a GPU into separate partitions, and performance optimizations for CUDA cores. Nvidia GeForce 30 series graphics units, as well as workstation and data center products, are developed based on this microarchitecture.

In general, Turing may be considered Nvidia’s most popular microarchitecture, because Turing’s combined ray tracing and rendering capabilities create impressive 3D animations and realistic images that are very similar to reality. According to Nvidia, Real-Time Ray Tracing in graphics units based on Turing microarchitecture can calculate a billion rays per second to create graphics images.

AMD

AMD (abbreviation for Advanced Micro Devices) was founded in 1969 and now operates as a prominent competitor to Nvidia and Intel in the field of producing processors and graphics processors. After buying ATI in 2006, this company developed the products of this brand under its brand name. AMD graphics units are produced in several different series:

- Radeon series: the mainstream series, inherited from ATI.

- Mobility Radeon Series: Includes AMD’s low-power graphics, mostly used in laptops.

- FirePro series: powerful AMD graphics designed for workstations.

- Radeon Pro series: They are known as the new generation of Fire Pro graphics.

AMD graphics used to be numbered with four digits: Radeon HD 7750.

In these graphics, the bigger the model number, the stronger and newer the graphics. For example, HD 8770 graphics are stronger and more up-to-date than HD 8750. Of course, this does not apply across generations: a 7th-generation card cannot be assumed to be weaker than an 8th-generation card on the basis of the generation number alone, without checking. AMD changed its naming scheme with the Radeon RX 5700 series; the RX 5700 XT and RX 5700 were the first graphics cards launched under the new scheme. AMD graphics units currently come in three general categories: the R5 series, the R7 series, and the R9 series.

- R5 and R6 series: low-end and relatively weak AMD graphics.

- R7 and R8 series: AMD’s mid-range graphics are suitable for editing in programs such as Photoshop and After Effects.

- R9 series: AMD’s most powerful graphics belong to this family and provide acceptable performance for gaming; some R9 series graphics are even designed for virtual reality (VR) devices.

Currently, the only active suffix for AMD graphics units is XT, which marks the higher-end version of a product with better performance and higher frequency.

The difference between graphics processor and graphics card

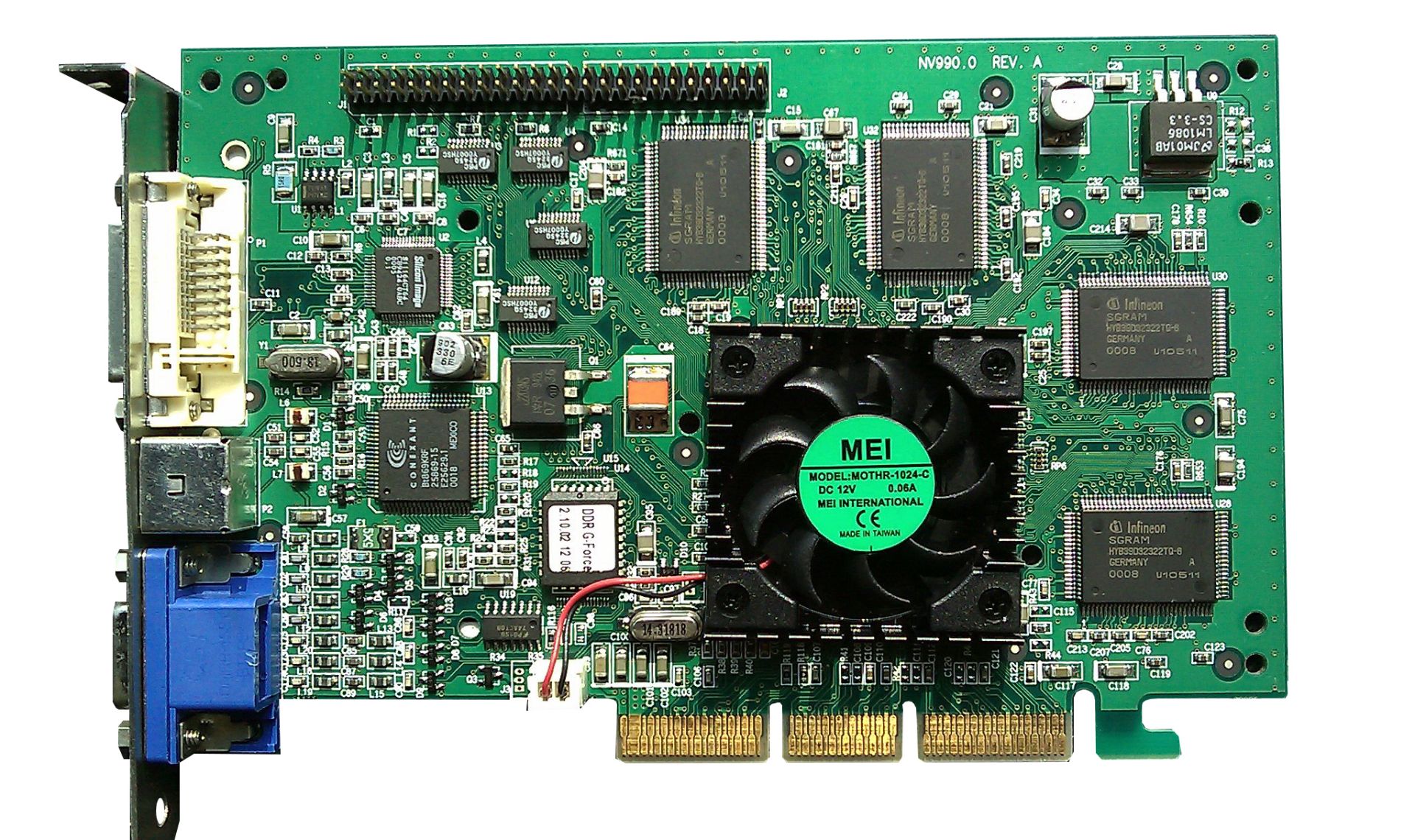

Since the graphics processor is a specialized unit for processing and designing computer graphics and has been optimized for this purpose, it performs this task much more efficiently than a central processor. This chip is responsible for most in-game graphics calculations, image rendering, color management, etc., and advanced graphic techniques such as ray tracing or shading are defined for it. The graphics card, on the other hand, is a physical hardware part of a computer system that carries many electronic components.

The production technology of graphic cards has been accompanied by many changes from the past to today, and two or three decades ago these parts were known as display cards or video cards. At that time, graphics cards did not have the sophistication of today, and the only thing they did was display images and video on the screen. With the increase in graphics capabilities and the support of cards for various hardware accelerators to provide different graphics techniques, the name of graphics card was gradually used for these parts.

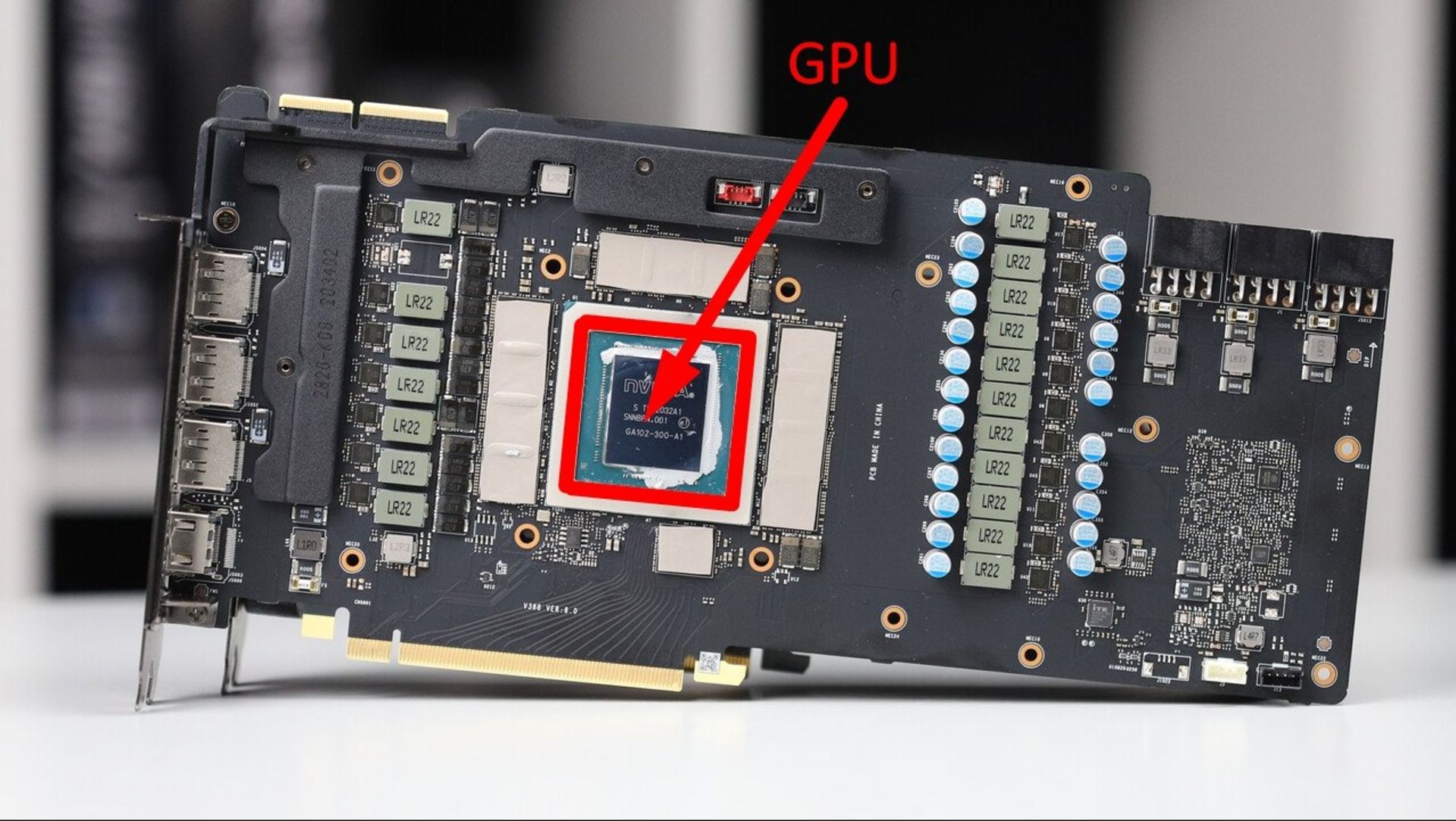

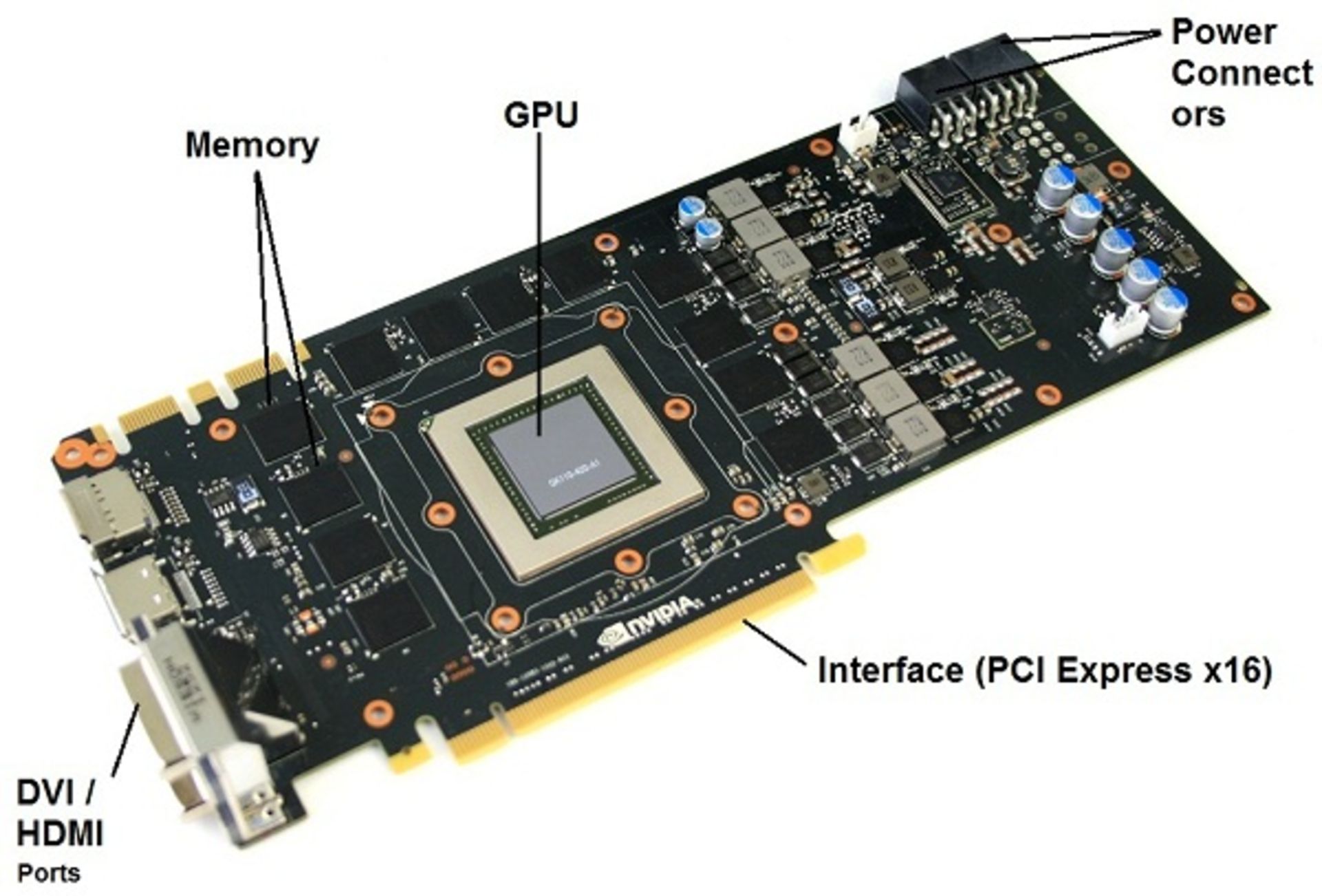

Graphics card components

Today, these parts are more powerful than before and use different technologies to meet what the system requires for purposes such as gaming. Therefore, not all graphics cards have exactly the same components, but the key parts are the same. To use this part, you will need to install the appropriate graphics driver for your graphics card on the system; this driver contains instructions on how to recognize and operate the graphics card and determines various specifications for running various games and programs. In addition to the graphics processor, the graphics card is equipped with other parts such as video memory, a printed circuit board (PCB), connectors, and cooling. You can get to know these parts in the next picture.

Video memory

Video memory is where the data being processed by the graphics processor is stored. It is separate from the main system RAM and is usually of the GDDR type. This unit can be offered in different capacities depending on the use.

Printed circuit board

A printed circuit board (PCB) is a board on which graphics card parts are placed and may consist of different layers. The material of these boards will be effective in the work quality of the graphic card.

Display connectors

After processing and performing calculations, the data needs cables and display connectors to be displayed on the screen. These cables use different types of connectors depending on the type of use of the product. For example, HDMI and DVI ports with more pins are used to display 4K resolution and very high frame rates, and VGA port is used to display images with lower resolutions; Today, most graphics cards have at least one HDMI port.

Bridge

For some high-end graphics cards, it is possible to use them together with other high-end graphics cards. Such a feature (Bridge) is a parallel processing algorithm for computer graphics that is used to increase processing power and is shown in Nvidia graphics cards with SLI (abbreviation of Scalable Link Interface) and in AMD graphics cards with the term Crossfire.

- SLI was first used by 3dfx in the Voodoo2 graphics card line; After buying 3dfx, Nvidia acquired this technology but did not use it. In 2004, Nvidia re-introduced the name SLI and intended to use it in modern computer systems based on the PCIe bus; But using it in today’s modern systems required compatible motherboards.

- Crossfire is a technology introduced by ATI that allows multiple graphics cards to be used simultaneously on one motherboard. For this technology, a controller chip was installed on the main board, which was responsible for controlling the channels between the cards and combining their output for display on the screen. Officially, up to four graphics cards can be installed in Crossfire, in which case the setup is called Quad-Crossfire. The technology was first officially introduced in September 2005 to compete with SLI.
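As a rough illustration of how rendering work can be divided between linked cards, the sketch below models alternate frame rendering (AFR), a mode that multi-GPU setups have used, in which consecutive frames are handed to the cards in turn. The function name `assign_frames_afr` and the GPU labels are hypothetical, and this is a simplified model rather than the actual driver logic of SLI or Crossfire.

```python
def assign_frames_afr(frame_count, gpu_names):
    """Assign frame indices to GPUs in round-robin order (alternate frame rendering)."""
    if not gpu_names:
        raise ValueError("at least one GPU is required")
    schedule = {name: [] for name in gpu_names}
    for frame in range(frame_count):
        # Frame i is rendered by GPU number (i modulo the number of GPUs).
        gpu = gpu_names[frame % len(gpu_names)]
        schedule[gpu].append(frame)
    return schedule


if __name__ == "__main__":
    # Two hypothetical cards share six frames: even frames on one, odd on the other.
    print(assign_frames_afr(6, ["GPU 0", "GPU 1"]))
```

With two cards, each one renders every second frame, which is why the combined setup can in principle approach twice the frame rate of a single card.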

Graphic interface

The slot that connects the graphics card to the motherboard has changed over time. Older graphics cards used PCI and then AGP slots; today the interface between graphics card and motherboard is PCIe (PCI Express), which connects the card, supplies power to the board, and transfers data. PCI stands for Peripheral Component Interconnect. In 2019, the PCI-SIG consortium announced the goals for the sixth generation of PCIe, and the final specification was published in 2022. Meanwhile, the fourth generation has not yet become widespread, and graphics cards for everyday use do not use the full capacity of PCIe 3.0.

PCIe 6.0 can transfer twice as much data as PCIe 5.0: up to 256 gigabytes per second over 16 lanes when both directions are counted (128 GB/s in each direction), without the need for higher operating frequencies. It is also backward compatible with previous generations, so older cards can be used in new slots.

PCI Express bandwidth by data transfer rate per direction (GB/s per direction)

| Slot width | PCIe 1.0 (2003) | PCIe 2.0 (2007) | PCIe 3.0 (2010) | PCIe 4.0 (2017) | PCIe 5.0 (2019) | PCIe 6.0 (2022) |
|---|---|---|---|---|---|---|
| x1 | 0.25 GB/s | 0.5 GB/s | 1 GB/s | 2 GB/s | 4 GB/s | 8 GB/s |
| x2 | 0.5 GB/s | 1 GB/s | 2 GB/s | 4 GB/s | 8 GB/s | 16 GB/s |
| x4 | 1 GB/s | 2 GB/s | 4 GB/s | 8 GB/s | 16 GB/s | 32 GB/s |
| x8 | 2 GB/s | 4 GB/s | 8 GB/s | 16 GB/s | 32 GB/s | 64 GB/s |
| x16 | 4 GB/s | 8 GB/s | 16 GB/s | 32 GB/s | 64 GB/s | 128 GB/s |
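The figures in the table above follow a simple pattern: each generation doubles the per-lane rate, and bandwidth grows in proportion to the number of lanes. The sketch below reproduces the table under that assumption, starting from the rounded 0.25 GB/s per lane for PCIe 1.0. The function name is hypothetical, and real-world throughput is somewhat lower than these rounded values because of encoding and protocol overhead.

```python
def pcie_bandwidth_gb_s(generation, lanes):
    """Approximate per-direction PCIe bandwidth in GB/s, using rounded table values."""
    if generation < 1 or generation > 6:
        raise ValueError("generation must be between 1 and 6")
    if lanes not in (1, 2, 4, 8, 16):
        raise ValueError("lanes must be 1, 2, 4, 8 or 16")
    base_per_lane = 0.25  # PCIe 1.0, one lane, one direction (rounded)
    # Each new generation doubles the per-lane rate; lanes add up linearly.
    return base_per_lane * 2 ** (generation - 1) * lanes


if __name__ == "__main__":
    for lanes in (1, 2, 4, 8, 16):
        row = [pcie_bandwidth_gb_s(gen, lanes) for gen in range(1, 7)]
        print(f"x{lanes}:", row)
```

For example, `pcie_bandwidth_gb_s(3, 16)` gives 16.0, matching the x16 entry for PCIe 3.0.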

Voltage regulator circuit

After the card receives its initial power through the PCIe interface, that power must be regulated before it reaches the components. This is the job of the voltage regulator module (VRM), which supplies the voltage and current required by parts such as the memory and the graphics processor. When this circuit delivers steady, adequate, and well-timed voltages, it can extend the life of the graphics card and optimize energy consumption. In effect, the VRM decides how power is delivered. The circuit consists of four sections: input capacitors, MOSFETs, chokes, and output capacitors.

- Input capacitors: current enters the circuit through the input capacitors, which store it and release it to the rest of the circuit when needed.

- MOSFETs: MOSFETs act like a switchable bridge that passes on the current stored in the input capacitors. The VRM has two kinds, high-side and low-side. When the graphics processor needs current, it passes through the high-side MOSFET; when the high-side MOSFET is switched off, the low-side MOSFET provides a path that keeps current flowing through the choke.

- Chokes: chokes are components that reduce noise in the current as much as possible. The graphics processor needs a smooth and stable current to function properly, and the chokes provide this by filtering out noise.

- Output capacitors: after the chokes have filtered the current, the output capacitors smooth it further and deliver it to the parts that need it.

Cooling system

Every graphics card must stay at a suitable temperature to perform at its best. Besides lowering the working temperature, the cooling system increases the durability of the card's components. It consists of two parts, a heatsink and a fan. The heatsink is usually made of copper or aluminum and is a passive component; its main purpose is to draw heat away from the graphics processor and spread it to the surrounding air. The fan, on the other hand, is the active part of the cooling system and blows air across the heatsink so that it can keep removing heat. Some low-end graphics cards have only a heatsink, but almost all mid-range and high-end cards combine a heatsink and a fan for proper and efficient cooling.

Types of graphics processor

Graphics processors come in different types, all of which perform calculations and graphics processing for a system. Below, we look at these types, how they work, and the advantages and disadvantages of each:

iGPU

iGPU (short for Integrated Graphics Processing Unit) is a graphics processing unit that is integrated with the central processor (CPU). An iGPU may be built into the motherboard or placed alongside the processor cores, in which case it is part of the same integrated chip. These graphics units generally do not have much processing power and are not suitable for advanced 3D game graphics and animations; they are designed for basic processing and cannot be upgraded. Using integrated graphics allows a system to be thinner and lighter and reduces power consumption and cost. AMD markets its processors with integrated graphics as APUs.

Of course, some modern processors are surprisingly powerful with integrated graphics. Not all processors have an integrated GPU. For example, Intel desktop processors whose model number ends in F, which are sold at a lower price for that reason, and the company's X-series processors do not have a graphics processing unit, so systems built on them need a separate graphics card for graphics processing.

Currently, AMD and Intel are working to improve the performance of their integrated graphics processors, and Apple has surprised many people with its Apple silicon chips, especially the M1 Max, whose integrated graphics processor is very powerful and can compete with high-end graphics cards.

dGPU

dGPU (short for Discrete Graphics Processing Unit) is a graphics processing unit that is used in a system as a dedicated, separate chip. A discrete GPU is usually much more powerful than an iGPU and makes it much easier to process large, sophisticated 3D graphics data. For gaming systems or advanced 3D rendering and design, a powerful dGPU is essential. A discrete GPU can be replaced and upgraded easily; in addition to very high power, these units have a dedicated cooling system and do not overheat when performing heavy graphics processing. dGPUs are one of the reasons gaming laptops are more expensive and heavier than ordinary laptops, with higher power consumption and shorter battery life. For this reason, buying a discrete graphics processor is recommended only if you use your system for gaming, producing 3D graphics content, or other heavy tasks.

Currently, the biggest names in the discrete GPU industry are AMD and Nvidia, although Intel has also recently launched its own laptop GPUs in the form of the Arc series, and plans to launch desktop graphics cards as well.

However, discrete GPUs require a dedicated cooling system to prevent overheating and maximize performance, which is why gaming laptops are much heavier than traditional laptops.

Cloud GPU

A cloud graphics processing unit lets the user access GPU services over the Internet; that is, you can use the processing power of a graphics processor without owning one. Cloud graphics may not deliver exceptional performance, but they suit users who do not have a large budget and do not need very advanced graphics processing. These users pay a provider for cloud graphics processing according to how much they use.
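Because cloud GPUs are billed by usage, one quick way to compare renting with buying is a break-even estimate. The sketch below is only illustrative: the function name and the prices in the example are hypothetical, and it ignores electricity, resale value, and differences in performance.

```python
def break_even_hours(purchase_price, hourly_rate):
    """Hours of cloud GPU use at which renting has cost as much as buying a card."""
    if purchase_price < 0:
        raise ValueError("purchase_price must not be negative")
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return purchase_price / hourly_rate


if __name__ == "__main__":
    # Hypothetical numbers: an 800-unit card versus a 0.5-unit-per-hour cloud GPU.
    print(break_even_hours(800.0, 0.5))  # 1600.0
```

Under these assumed prices, renting is cheaper for anyone who expects to use the GPU for fewer than 1,600 hours in total.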

eGPU

An external graphics card, or eGPU, sits outside the system in an enclosure equipped with a PCIe slot and a power supply, and connects to the system through a USB-C or Thunderbolt port. It lets the user pair powerful graphics with a compact and light system.

- Mac computers equipped with the M1 chip do not support external graphics cards.

In recent years, the use of external graphics cards has increased. Since the graphics processing power and output image quality of laptops are generally lower than those of desktops, users have turned to external graphics to close this gap. External graphics are mostly used with laptops, but some companies also use them with older desktops that have little processing power. Note that if a laptop's own graphics can be upgraded, an external graphics card is hard to justify. In addition, issues such as the large space external graphics require and their high cost lead many users, especially gamers, to prefer upgrading a desktop's graphics over using an external graphics card.

Mobile GPU

Mobile graphics shape our visual experience of phones and, for some users such as gamers, can even be a deciding factor and a reason for loyalty to a particular brand.

The mobile system on a chip (SoC), the chip found in today's phones, contains not only the central processing unit but also units for artificial intelligence processing, an image signal processor for the camera, a modem, and other important components, as well as a graphics processing unit. The mobile GPU was introduced to handle heavy workloads such as 3D games and changed the world of phones, especially for gamers. As mentioned, the processing cores in a mobile graphics processor are individually less powerful than the cores of the central processor, but because they work simultaneously and quickly, they make it possible to display heavy content and complex graphics on phones.
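The trade-off between a few fast cores and many slower ones can be made concrete with a toy model. The sketch below assumes the work consists of fully independent tasks (for example, one per pixel) and that each core handles one task at a time; the function name and all core counts and timings are hypothetical and not measurements of any real chip.

```python
def batch_time_us(tasks, cores, microseconds_per_task):
    """Time in microseconds to finish independent tasks spread evenly over cores."""
    if tasks < 0 or cores <= 0 or microseconds_per_task < 0:
        raise ValueError("tasks and time must be non-negative, cores must be positive")
    # Each core works through its share one task at a time (ceiling division).
    rounds = (tasks + cores - 1) // cores
    return rounds * microseconds_per_task


if __name__ == "__main__":
    pixels = 1_000_000
    # Hypothetical CPU: 8 cores, 1 microsecond per pixel.
    print(batch_time_us(pixels, 8, 1))     # 125000
    # Hypothetical GPU: 1000 cores, each four times slower per pixel.
    print(batch_time_us(pixels, 1000, 4))  # 4000
```

In this model the slower cores still finish the batch far sooner, simply because so many of them work at the same time.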

Types of mobile GPUs

ARM is one of the main players in graphics processing units for phones and owns the well-known Mali brand. Qualcomm holds a large share of the phone graphics market with its Adreno graphics processors. Imagination Technologies has been producing PowerVR graphics processors for years, and Apple used that company's graphics processors for a long time before developing its own. Unlike Apple, which has its own graphics processors, Samsung uses ARM or Qualcomm graphics processors for the graphics processing in its phones.

- ARM; Mali GPU: Mali mobile GPUs are developed by ARM and are available across different price ranges. For example, the graphics processor used in the Galaxy S21 Ultra is the Mali-G78 MP14, which can perform graphics processing at high speed and power.

- Qualcomm; Adreno graphics processor: Alongside the powerful Android processors it produces under the Snapdragon name, Qualcomm also performs very well in mobile graphics processors. Like Mali GPUs, these units cover a wide range of prices and target markets. For example, the Adreno 660 GPU used in the Asus ROG Phone 5 gaming phone in 2021 was recognized as one of Qualcomm's most powerful graphics processors.

- Imagination Technologies; PowerVR graphics processor: PowerVR graphics processors were once used in the most popular iPhones, but Apple stopped using them when it began producing its own graphics for its A-series Bionic chips. Today, PowerVR GPUs are mostly used in affordable MediaTek chips and in budget and mid-range phones from brands like Motorola, Nokia, and Oppo.

Other applications of graphics processors

GPUs were originally developed as an evolution of graphics accelerators to help lighten the workload of processors. Until the last two decades, they were mostly known as accelerators for rendering 3D graphics, especially in games. However, because these units have high parallel processing power and can process more data at once than the central processing unit (CPU), they gradually came to be used in fields other than gaming, such as machine learning and digital currency mining. Below, we look at uses of graphics processors other than gaming:

Video editing

Modern graphics cards are equipped with video encoding capabilities and can prepare and format video data before playback. Video encoding is a complex process that takes a long time when it is done on the central processing unit alone. With their very fast parallel processing, GPUs can handle video encoding relatively quickly without overloading system resources. Note that high-resolution video encoding may take some time even with powerful GPUs, but if the GPU supports higher-resolution video formats, it will perform much better than the CPU for video editing.

3D graphics rendering

Although 3D graphics are most commonly used in video games, they are increasingly used in other forms of media such as movies, television shows, advertisements, and digital art displays. Like video editing, creating high-resolution 3D graphics can be an intensive and time-consuming process, even with advanced hardware.